Introduction

Prompt engineering tools are platforms that help users create, test, optimize, and manage prompts for large language models (LLMs). By enabling structured prompt design and evaluation, they improve the quality, accuracy, and consistency of AI-generated outputs.

As generative AI adoption grows, prompt engineering has become a core skill for developers, marketers, and AI teams. These tools simplify experimentation, allow version control of prompts, and provide insights into how prompts perform across different models and use cases.

Real-world use cases include:

- Optimizing prompts for chatbots and assistants

- Testing AI outputs for accuracy and consistency

- Building prompt templates for marketing content

- Evaluating LLM performance across scenarios

- Managing prompt libraries for teams

Key evaluation criteria for buyers:

- Prompt testing and experimentation capabilities

- Version control and prompt management

- Integration with LLM APIs

- Evaluation and analytics features

- Collaboration and team workflows

- Ease of use and interface

- Scalability for enterprise use

- Security and compliance

- Support for multiple models

- Cost and value

Best for:

Prompt engineering tools are ideal for AI engineers, developers, marketers, and product teams working with generative AI.

Not ideal for:

Users who only need basic prompt usage without structured testing or optimization.

Key Trends in Prompt Engineering Tools

- Prompt versioning and lifecycle management

- A/B testing for prompt optimization

- Integration with multiple LLM providers

- Automated prompt evaluation systems

- Low-code and no-code prompt builders

- Collaboration tools for prompt teams

- Analytics-driven prompt improvement

- Integration with LLM orchestration frameworks

- Reusable prompt templates and libraries

- Focus on reliability and reproducibility
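To make the A/B testing and automated evaluation trends above more concrete, here is a minimal sketch of how two prompt variants might be compared. Everything in it is an assumption for illustration: `fake_model` is a hypothetical stand-in for a real LLM call, and `score_brevity` is a toy metric, not how any tool in this list actually scores outputs.

```python
import random
from statistics import mean


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for an LLM call; swap in a real client."""
    # Assumption: prompts that ask for concision tend to yield shorter answers.
    if "concise" in prompt:
        length = rng.randint(5, 15)
    else:
        length = rng.randint(10, 40)
    return " ".join(["word"] * length)


def score_brevity(output: str) -> float:
    """Toy metric: shorter outputs score higher (between 0 and 1)."""
    return 1.0 / (1 + len(output.split()))


def ab_test(prompt_a, prompt_b, model=fake_model, score=score_brevity,
            trials=50, seed=0):
    """Run both prompts `trials` times and return (winner, mean scores)."""
    rng = random.Random(seed)  # fixed seed keeps the comparison reproducible
    scores = {"A": [], "B": []}
    for _ in range(trials):
        scores["A"].append(score(model(prompt_a, rng)))
        scores["B"].append(score(model(prompt_b, rng)))
    means = {name: mean(values) for name, values in scores.items()}
    winner = max(means, key=means.get)
    return winner, means
```

In practice the metric would be task-specific (accuracy against reference answers, a rubric score, and so on), and a real comparison would also check whether the difference is larger than run-to-run noise.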

How We Selected These Tools (Methodology)

- Evaluated prompt testing and optimization capabilities

- Assessed integration with LLM APIs

- Reviewed version control and management features

- Checked analytics and evaluation tools

- Considered ease of use and UI/UX

- Examined collaboration and team features

- Evaluated scalability and enterprise readiness

- Reviewed community adoption and ecosystem

- Considered open-source vs managed tools

- Ensured applicability to everyone from individual users to enterprises

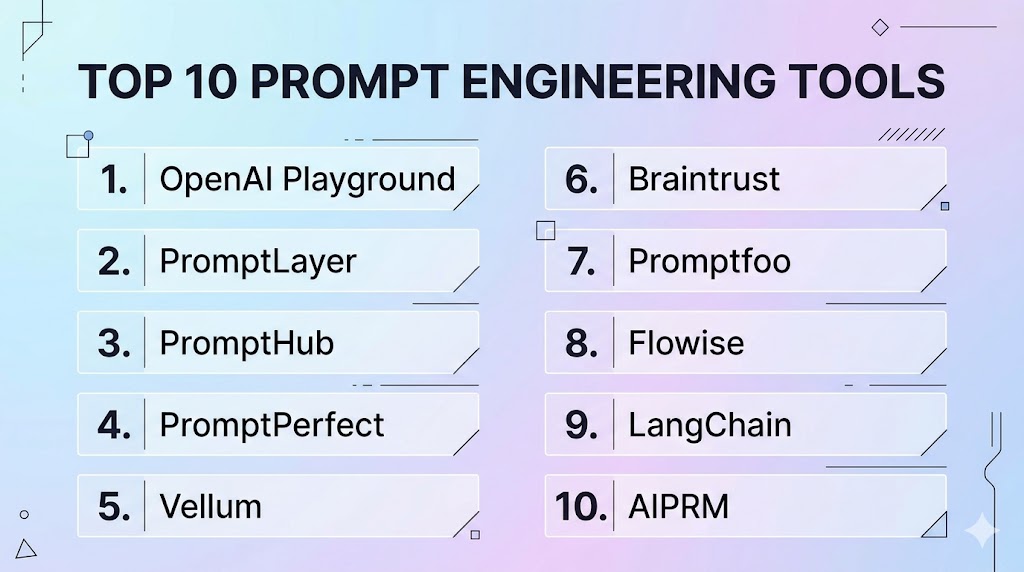

Top 10 Prompt Engineering Tools

#1 — OpenAI Playground

Short description: OpenAI Playground allows users to experiment with prompts and test outputs interactively using advanced language models.

Key Features

- Prompt testing

- Parameter tuning

- Real-time output

- Model selection

- Interactive UI

- Multi-language support

Pros

- Easy experimentation

- Flexible

Cons

- Limited collaboration

- No version control

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Large community

#2 — PromptLayer

Short description: PromptLayer provides prompt logging, versioning, and monitoring for LLM applications.

Key Features

- Prompt versioning

- Logging

- Analytics

- API integration

- Monitoring

Pros

- Strong tracking

- Developer-friendly

Cons

- Requires setup

- Limited UI

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Growing community
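Prompt versioning is easier to reason about with a small example. The sketch below is an in-memory registry written for illustration only; `PromptRegistry` and `PromptVersion` are hypothetical names, and this is not PromptLayer's API or data model.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    checksum: str
    created_at: datetime


class PromptRegistry:
    """Hypothetical in-memory store that keeps every version of a prompt."""

    def __init__(self):
        self._versions = {}  # prompt name -> list of PromptVersion

    def save(self, name: str, text: str) -> PromptVersion:
        history = self._versions.setdefault(name, [])
        checksum = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        # Saving unchanged text does not create a new version.
        if history and history[-1].checksum == checksum:
            return history[-1]
        entry = PromptVersion(
            version=len(history) + 1,
            text=text,
            checksum=checksum,
            created_at=datetime.now(timezone.utc),
        )
        history.append(entry)
        return entry

    def get(self, name: str, version=None) -> PromptVersion:
        """Return the latest version, or a specific 1-based version."""
        history = self._versions[name]
        return history[-1] if version is None else history[version - 1]
```

Keeping old versions immutable is what makes rollbacks and side-by-side comparisons possible; a hosted tool would add persistence, access control, and logging of each request on top of this idea.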

#3 — PromptHub

Short description: PromptHub helps teams collaborate on prompt creation and optimization.

Key Features

- Prompt management

- Collaboration tools

- Version control

- Testing workflows

- Template library

Pros

- Team collaboration

- Easy management

Cons

- Paid features

- Limited integrations

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Active community

#4 — PromptPerfect

Short description: PromptPerfect optimizes prompts automatically for better AI outputs.

Key Features

- Prompt optimization

- AI-assisted refinement

- Multi-model support

- Output improvement

- Automation

Pros

- Easy optimization

- Saves time

Cons

- Limited customization

- Paid

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Community support

#5 — Vellum

Short description: Vellum provides a platform for building and evaluating prompt-based applications.

Key Features

- Prompt testing

- Workflow building

- Evaluation tools

- Model integration

- Experiment tracking

Pros

- Structured workflows

- Developer-friendly

Cons

- Learning curve

- Paid

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Active community

#6 — Braintrust

Short description: Braintrust focuses on evaluating and improving LLM performance with testing frameworks.

Key Features

- Prompt evaluation

- Testing pipelines

- Performance analytics

- Experiment tracking

- Integration with models

Pros

- Strong evaluation

- Scalable

Cons

- Developer-focused

- Complex setup

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Growing community

#7 — Promptfoo

Short description: Promptfoo is an open-source tool for testing and evaluating prompts.

Key Features

- Prompt testing

- Evaluation metrics

- CLI-based workflows

- Multi-model support

- Open-source

Pros

- Free and flexible

- Developer-friendly

Cons

- CLI-first (the web viewer is mainly for reviewing results)

- Requires setup

Platforms / Deployment

- Local / Cloud

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- APIs

Support & Community

- Open-source community
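The general idea behind assertion-based prompt testing can be sketched in a few lines of Python. This is not Promptfoo's configuration format or API; `PromptCase`, `stub_model`, and `run_suite` are hypothetical names, and the stub simply stands in for a real model call.

```python
from dataclasses import dataclass


@dataclass
class PromptCase:
    variables: dict      # values substituted into the prompt template
    must_contain: str    # substring the output is expected to include
    max_words: int       # upper bound on output length


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: greets the last word of the prompt."""
    return "Hello, " + prompt.split()[-1]


def run_suite(template: str, cases, model=stub_model):
    """Render the template for each case, call the model, collect failures."""
    results = []
    for case in cases:
        output = model(template.format(**case.variables))
        failures = []
        if case.must_contain not in output:
            failures.append(f"missing {case.must_contain!r}")
        if len(output.split()) > case.max_words:
            failures.append(f"more than {case.max_words} words")
        results.append((case, output, failures))
    return results
```

A case passes when its failure list is empty, so the same suite can be rerun after every prompt change to catch regressions.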

#8 — Flowise

Short description: Flowise provides a visual interface for building prompt-based workflows and LLM pipelines.

Key Features

- Drag-and-drop builder

- Prompt workflows

- LLM integration

- RAG support

- Visual UI

Pros

- Easy to use

- No-code friendly

Cons

- Limited scalability

- Basic features

Platforms / Deployment

- Cloud / Local

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Growing community

#9 — LangChain

Short description: LangChain includes prompt engineering capabilities as part of its orchestration framework.

Key Features

- Prompt templates

- Workflow orchestration

- Memory systems

- API integration

- Multi-agent support

Pros

- Highly flexible

- Large ecosystem

Cons

- Complex

- Requires coding

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- APIs, databases

Support & Community

- Large community
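For readers new to prompt templates, the sketch below shows the underlying idea using only Python's standard library. It deliberately uses `string.Template` rather than LangChain's own classes, so treat it as an illustration of the concept, not of LangChain's API; `SUMMARY_PROMPT` and `build_prompt` are hypothetical names.

```python
from string import Template

# A reusable template with named placeholders ($role, $n_sentences, $text).
SUMMARY_PROMPT = Template(
    "You are a $role. Summarize the following text "
    "in $n_sentences sentences:\n\n$text"
)


def build_prompt(template: Template, **variables) -> str:
    """Fill in a template; raises KeyError if a placeholder is missing."""
    return template.substitute(**variables)
```

Failing loudly on a missing variable is a deliberate choice here: a half-filled prompt sent to a model is harder to debug than an immediate error.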

#10 — AIPRM

Short description: AIPRM is a prompt management tool focused on reusable templates for content generation.

Key Features

- Prompt templates

- Content generation

- SEO prompts

- Easy integration

- Template sharing

Pros

- Easy to use

- Ready templates

Cons

- Limited advanced features

- Basic customization

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- Browser tools

Support & Community

- Active community

Comparison Table

| Tool | Best For | Platform | Deployment | Standout Feature | Rating |
|---|---|---|---|---|---|
| Playground | Testing | Web | Cloud | Simplicity | N/A |
| PromptLayer | Tracking | Cloud | Cloud | Logging | N/A |
| PromptHub | Teams | Cloud | Cloud | Collaboration | N/A |
| PromptPerfect | Optimization | Cloud | Cloud | AI tuning | N/A |
| Vellum | Workflows | Cloud | Cloud | Structured testing | N/A |
| Braintrust | Evaluation | Cloud | Cloud | Analytics | N/A |
| Promptfoo | Devs | Local | Hybrid | Open-source | N/A |
| Flowise | No-code | Multi | Hybrid | Visual UI | N/A |
| LangChain | Advanced | Multi | Hybrid | Flexibility | N/A |
| AIPRM | Content | Web | Cloud | Templates | N/A |

Evaluation & Scoring

| Tool | Core | Ease | Integration | Security | Performance | Support | Value | Total |
|---|---|---|---|---|---|---|---|---|
| Playground | 8 | 9 | 7 | 7 | 8 | 8 | 8 | 7.9 |
| PromptLayer | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.8 |
| PromptHub | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.6 |
| PromptPerfect | 7 | 9 | 7 | 7 | 8 | 7 | 8 | 7.6 |
| Vellum | 9 | 7 | 8 | 8 | 8 | 8 | 7 | 8.0 |
| Braintrust | 9 | 6 | 8 | 8 | 9 | 8 | 7 | 8.1 |
| Promptfoo | 8 | 6 | 7 | 7 | 8 | 7 | 9 | 7.6 |
| Flowise | 7 | 9 | 7 | 7 | 7 | 7 | 8 | 7.4 |
| LangChain | 10 | 6 | 9 | 7 | 9 | 9 | 8 | 8.6 |
| AIPRM | 7 | 10 | 6 | 7 | 7 | 7 | 9 | 7.5 |
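If you want to rescore the tools against your own priorities, a weighted total is straightforward to compute. The sketch below is one way to do it; the weights in `WEIGHTS` are purely assumed for illustration, since the weighting behind the Total column above is not stated, and they do not reproduce every Total in the table.

```python
# Hypothetical weights (must sum to 1.0); adjust them to your own priorities.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integration": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted sum of per-criterion scores, rounded to 1 decimal."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    return round(sum(scores[name] * weights[name] for name in weights), 1)
```

Raising the weight on the criteria you care most about (for example, security for a regulated industry) can change the ranking, which is a good reason to rescore rather than rely on a single published total.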

Which Prompt Engineering Tool Is Right for You?

Solo / Freelancer

OpenAI Playground or AIPRM is best for quick experimentation.

SMB

PromptPerfect offers easy, automated prompt optimization, while PromptHub adds team collaboration.

Mid-Market

Vellum or Flowise provides structured workflows.

Enterprise

LangChain or Braintrust delivers scalability and advanced features.

Frequently Asked Questions (FAQs)

What is a prompt engineering tool?

A prompt engineering tool helps design, test, and optimize prompts used with large language models. It enables users to improve output quality, manage prompt versions, and analyze performance. These tools are essential for building reliable AI applications.

Why is prompt engineering important?

Prompt engineering determines how effectively an AI model responds to user input. Well-structured prompts improve accuracy, reduce errors, and ensure consistent outputs. It is a key factor in successful AI implementation.

Can I use prompt tools without coding?

Yes, some tools like Flowise and PromptHub offer no-code or low-code interfaces. However, developer-focused tools like LangChain and Promptfoo may require coding knowledge.

Do these tools support multiple models?

Most prompt engineering tools support multiple LLM providers. This allows users to test prompts across different models and choose the best-performing one.

Are prompt engineering tools scalable?

Yes, enterprise tools provide scalability and can handle large volumes of prompt testing and optimization. They are designed for production environments.

Can these tools integrate with applications?

Yes, most tools offer APIs and integrations with applications, workflows, and orchestration frameworks. This enables seamless deployment of optimized prompts.

Are prompt engineering tools secure?

Security depends on the platform and deployment. Enterprise tools provide access control, encryption, and governance features for secure usage.

What industries use prompt engineering tools?

Industries such as marketing, finance, healthcare, and technology use these tools to optimize AI-driven workflows and content generation.

What are the limitations?

Limitations include dependency on model quality, complexity in setup, and the need for experimentation. Proper testing is required to achieve optimal results.

How do I choose the right tool?

Choose based on your use case, technical expertise, and scalability needs. Testing tools with real workflows helps identify the best fit.

Conclusion

Prompt engineering tools have become essential for maximizing the performance and reliability of AI systems by enabling structured prompt design, testing, and optimization. Beginner-friendly tools like OpenAI Playground and AIPRM make it easy to experiment and generate content quickly, while platforms like PromptPerfect and PromptHub provide collaborative and optimization-focused capabilities for growing teams. Mid-market users benefit from tools like Vellum and Flowise, which combine workflow management with ease of use. Enterprises can leverage advanced platforms like LangChain and Braintrust to build scalable, production-ready AI systems with strong evaluation and monitoring capabilities. Choosing the right tool depends on your level of expertise, use case complexity, and integration needs. A practical approach is to experiment with multiple tools, refine prompts continuously, and build a structured prompt strategy for long-term success.