Introduction

Batch processing frameworks are software platforms that process large volumes of data in discrete chunks, or “batches”, rather than continuously. They are widely used for ETL (Extract, Transform, Load) jobs, reporting, financial calculations, data warehousing, and periodic analytics. Unlike real-time processing, batch processing suits workloads where latency is not critical but throughput and reliability are paramount.

Batch frameworks provide reliability, fault tolerance, and scalability, making them essential for organizations that need to process large datasets efficiently, often on scheduled intervals.
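The core idea of processing data in discrete chunks, with progress recorded so that a failed run can resume, fits in a few lines of Python. This is a simplified, single-process illustration under stated assumptions: `iter_batches` and `run_job` are hypothetical helpers, the per-batch "transform" is just a sum, and an in-memory set stands in for the durable checkpoint store a real framework would use.

```python
from itertools import islice
from typing import Iterable, Iterator, List, Set


def iter_batches(records: Iterable[int], size: int) -> Iterator[List[int]]:
    """Yield consecutive chunks of `size` records; the last chunk may be shorter."""
    if size <= 0:
        raise ValueError("size must be positive")
    it = iter(records)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def run_job(records: Iterable[int], size: int, done: Set[int]) -> List[int]:
    """Process each batch once. `done` stands in for a durable checkpoint of
    completed batch numbers, so a rerun after a failure skips finished work."""
    results = []
    for batch_no, batch in enumerate(iter_batches(records, size)):
        if batch_no in done:
            continue  # finished in an earlier run
        results.append(sum(batch))  # hypothetical per-batch transform
        done.add(batch_no)  # record progress only after the batch succeeds
    return results
```

With an empty `done` set, `run_job(range(10), 4, done)` returns `[6, 22, 17]`; calling it again with the same set returns `[]` because every batch is already checkpointed.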

Real-world use cases include:

- Nightly ETL jobs loading data into warehouses.

- Aggregating sales, inventory, or financial data for reporting.

- Performing large-scale machine learning training on historical data.

- Running periodic compliance and audit reports.

- Data archival and backup transformations.

Key evaluation criteria for buyers:

- Scalability for large data volumes

- Fault tolerance and reliability

- Support for complex transformations and workflows

- Scheduling and orchestration capabilities

- Integration with data sources, warehouses, and cloud storage

- Performance monitoring and logging

- Ease of use for developers

- Security, governance, and compliance

- Cost-effectiveness

- Cloud, on-premises, or hybrid deployment options

Best for:

Batch processing frameworks are ideal for data engineers, analytics teams, and IT operations handling large datasets with predictable processing schedules.

Not ideal for:

Organizations that need low-latency processing of streaming data are better served by a stream processing framework.

Key Trends in Batch Processing Frameworks

- Unified batch and streaming frameworks for hybrid pipelines.

- Cloud-native batch processing for elastic scaling and reduced infrastructure management.

- Integration with big data ecosystems such as Hadoop, Spark, and cloud data warehouses.

- Enhanced scheduling and orchestration with workflow management tools.

- Support for AI and ML pipelines processing historical datasets.

- Observability and monitoring integrated for large-scale jobs.

- Low-code or high-level APIs to simplify pipeline development.

- Security and governance integrated into batch pipelines.

- Containerization and Kubernetes orchestration for modern deployment.

- Cost optimization via spot instances and elastic cloud resource scaling.

How We Selected These Tools (Methodology)

- Assessed processing speed and scalability for large datasets.

- Evaluated workflow orchestration and scheduling capabilities.

- Reviewed integration with storage, data lakes, and warehouses.

- Checked fault tolerance, logging, and monitoring features.

- Considered developer usability and API support.

- Examined security, governance, and compliance.

- Reviewed community support, documentation, and enterprise support options.

- Evaluated cloud, on-prem, and hybrid deployment flexibility.

- Factored cost-effectiveness and resource efficiency.

- Ensured relevance across SMB, mid-market, and enterprise contexts.

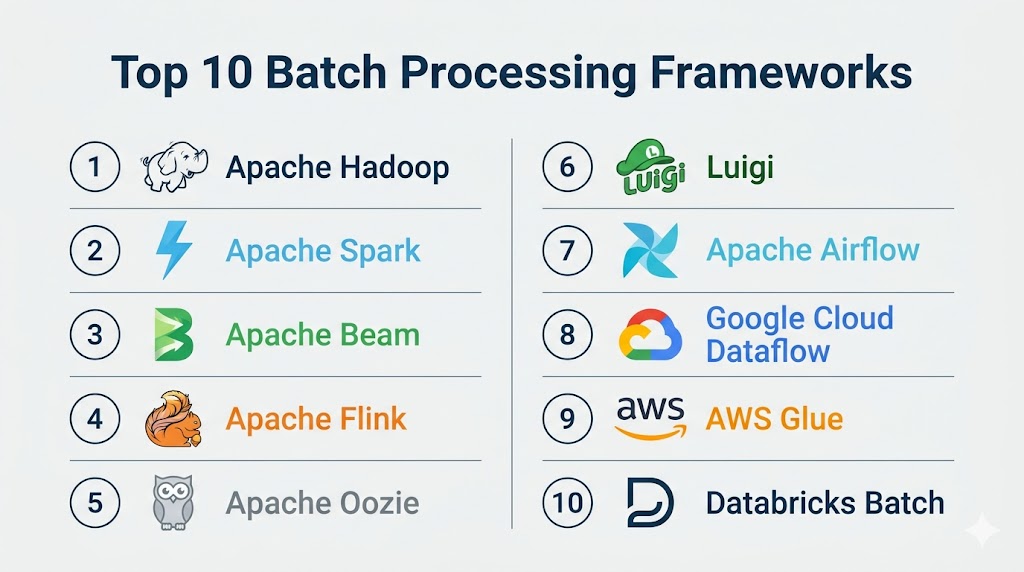

Top 10 Batch Processing Frameworks

#1 — Apache Hadoop

Short description: Hadoop is an open-source framework for distributed storage and batch processing of large datasets using the MapReduce programming model.
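MapReduce splits a job into a map phase that emits key-value pairs, a shuffle that groups those pairs by key, and a reduce phase that aggregates each group. The classic word-count example can be sketched in a single Python process to show that data flow. This illustrates the programming model only; it is not Hadoop's Java `Mapper`/`Reducer` API, and the function names are hypothetical. On a real cluster each phase runs in parallel across many nodes and the shuffle moves data over the network.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    # Mapper: emit (word, 1) for every word in one input line.
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    # Shuffle/sort: group all values by key, as the framework does
    # between the map and reduce phases.
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    # Reducer: combine every value emitted for one key.
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    pairs = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(k, v) for k, v in shuffle_phase(pairs).items())
```

For example, `word_count(["the quick fox", "The lazy dog"])` counts `"the"` twice and every other word once.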

Key Features

- Distributed storage with HDFS

- MapReduce batch processing

- Fault tolerance with data replication

- Scalability across clusters

- Integration with Hive, Pig, and Spark

- Wide ecosystem of Hadoop tools

Pros

- Handles massive datasets

- Mature and well-supported

Cons

- Complex setup and maintenance

- MapReduce programming can be verbose

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Kerberos authentication, HDFS permissions

- Compliance depends on deployment

Integrations & Ecosystem

- Hive, Pig, Spark, HBase, BI tools

Support & Community

- Large open-source community

- Enterprise support via Hadoop vendors

#2 — Apache Spark

Short description: Apache Spark is a unified analytics engine for batch and stream processing, optimized for large-scale data.

Key Features

- In-memory batch processing for speed

- APIs in Java, Scala, Python, R

- Fault-tolerant and scalable

- Integration with Hadoop, Hive, HDFS

- Advanced analytics support (MLlib, GraphX)
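Much of Spark's speed advantage comes from keeping an intermediate dataset in memory and reusing it across several computations instead of re-reading it from storage; in PySpark this is requested with `DataFrame.cache()`. The plain-Python sketch below models only that idea and does not use Spark: `Source`, `clean`, and `two_reports` are hypothetical names, and the scan counter stands in for expensive disk or HDFS reads.

```python
from typing import Dict, List, Optional, Tuple

Row = Dict[str, float]


class Source:
    """Hypothetical stand-in for slow storage; counts full scans."""

    def __init__(self, rows: List[Row]) -> None:
        self.rows = rows
        self.scans = 0

    def scan(self) -> List[Row]:
        self.scans += 1
        return list(self.rows)


def clean(rows: List[Row]) -> List[Row]:
    # Hypothetical transformation: drop refunds (non-positive amounts).
    return [r for r in rows if r["amount"] > 0]


def two_reports(source: Source, cache: bool) -> Tuple[float, int]:
    """Compute a total and a count from the same cleaned dataset."""
    cached: Optional[List[Row]] = clean(source.scan()) if cache else None

    def dataset() -> List[Row]:
        # With caching, reuse the in-memory result; otherwise recompute from storage.
        return cached if cached is not None else clean(source.scan())

    total = sum(r["amount"] for r in dataset())
    count = len(dataset())
    return total, count
```

With `cache=True` the source is scanned once for both reports; with `cache=False` it is scanned once per report.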

Pros

- Fast and flexible

- Supports batch, streaming, and machine learning

Cons

- Requires cluster management

- Memory-intensive workloads

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Kerberos, TLS/SSL encryption, ACLs

- Compliance depends on setup

Integrations & Ecosystem

- Hadoop, Kafka, Hive, BI tools

Support & Community

- Large open-source community

- Managed offerings (Databricks)

#3 — Apache Beam

Short description: Apache Beam provides a unified programming model for batch and stream processing across multiple execution engines.

Key Features

- Unified APIs for batch and streaming

- Multiple execution engines, called runners (Flink, Spark, Dataflow)

- Windowing and event-time processing

- SDKs in Java, Python, Go
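Event-time windowing groups records by when they happened rather than when they arrived. In the Beam Python SDK, fixed windows are applied with `beam.WindowInto(window.FixedWindows(60))`. The plain-Python sketch below models only the window-assignment and aggregation step; `fixed_window_sums` is a hypothetical helper, timestamps are assumed to be integer seconds, and watermarks, triggers, and late data are deliberately ignored.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def fixed_window_sums(events: Iterable[Tuple[int, float]], width: int) -> Dict[int, float]:
    """Sum values per fixed event-time window, keyed by window start.

    Each event is (event_timestamp, value); arrival order does not matter."""
    if width <= 0:
        raise ValueError("width must be positive")
    sums: Dict[int, float] = defaultdict(float)
    for ts, value in events:
        start = ts - ts % width  # align the timestamp to its window start
        sums[start] += value
    return dict(sorted(sums.items()))
```

Events arriving out of order, such as `[(125, 1.0), (5, 2.0), (61, 3.0), (59, 4.0)]` with 60-second windows, still land in the windows starting at 0, 60, and 120.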

Pros

- Flexible execution across engines

- Simplifies hybrid pipelines

Cons

- Requires a separate execution engine (runner) at runtime

- Steep learning curve

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Engine-dependent

- Encryption and access controls are provided by the chosen runner

Integrations & Ecosystem

- Kafka, cloud storage, warehouses, BI tools

Support & Community

- Open-source community

- Documentation and examples

#4 — Apache Flink

Short description: Apache Flink offers both batch and stream processing with low latency and high throughput.

Key Features

- Unified batch/stream processing

- Fault tolerance and distributed execution

- Event-time processing and windowing

- APIs for Java, Python, and SQL (the Scala APIs are deprecated)

Pros

- High performance

- Supports complex batch analytics

Cons

- Operational complexity

- Learning curve for batch pipelines

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Depends on deployment

- Supports encryption

Integrations & Ecosystem

- Hadoop, Kafka, storage systems

Support & Community

- Open-source community

- Vendor support via managed options

#5 — Apache Oozie

Short description: Oozie is a workflow scheduler for managing Hadoop batch jobs.

Key Features

- Workflow orchestration for Hadoop jobs

- Supports MapReduce, Spark, Hive, Pig

- Time-based and data-availability triggers (coordinators)

- Extensible with custom actions
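Oozie coordinators start a workflow only when its scheduled time has arrived and its input data is available. Real coordinators are defined in XML; the Python sketch below only models the trigger condition. `ready_to_run` is a hypothetical helper, and checking for a `_SUCCESS` marker file is an assumption that mirrors the completion flag Hadoop jobs typically write into their output directories.

```python
from datetime import datetime
from pathlib import Path
from typing import Iterable


def ready_to_run(
    nominal_time: datetime,
    now: datetime,
    input_dirs: Iterable[Path],
    done_flag: str = "_SUCCESS",
) -> bool:
    """Coordinator-style trigger: fire only when the scheduled (nominal) time
    has passed AND every input directory contains its completion marker."""
    if now < nominal_time:
        return False  # time condition not met yet
    # Data condition: each upstream dataset must be marked complete.
    return all((d / done_flag).exists() for d in input_dirs)
```

Separating the time check from the data check mirrors why coordinators are useful: a nightly job scheduled for 02:00 still waits if the upstream load that feeds it finishes late.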

Pros

- Simplifies complex batch workflows

- Well-integrated with Hadoop ecosystem

Cons

- Hadoop-specific

- Limited to orchestration, not processing

Platforms / Deployment

- Linux / On-prem / Hybrid

Security & Compliance

- Kerberos authentication

- Depends on Hadoop deployment

Integrations & Ecosystem

- Hadoop ecosystem: Spark, Hive, Pig, HDFS

Support & Community

- Open-source support

- Documentation available

#6 — Luigi

Short description: Luigi is a Python-based workflow orchestration framework for batch jobs.

Key Features

- Task dependency management

- Workflow visualization

- Supports Hadoop, Spark, and custom tasks

- Retry and failure handling
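Luigi models a pipeline as tasks that declare their dependencies (`requires()`) and outputs (`output()`); a task whose output already exists counts as complete and is skipped. The plain-Python sketch below mimics that scheduling logic with the standard library's `graphlib.TopologicalSorter` (Python 3.9+). It is not Luigi's API: `run_pipeline` and the task names are hypothetical, and a set of names stands in for "output exists" checks.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_pipeline(
    deps: Dict[str, Set[str]],
    complete: Set[str],
    run: Callable[[str], None],
) -> List[str]:
    """Run every task after its dependencies, skipping tasks already complete.

    `deps` maps each task to the tasks it requires."""
    executed: List[str] = []
    for task in TopologicalSorter(deps).static_order():
        if task in complete:
            continue  # Luigi-style idempotency: output already exists
        run(task)
        complete.add(task)
        executed.append(task)
    return executed
```

For a chain `extract -> aggregate -> report` where `extract` already finished, only `aggregate` and then `report` run.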

Pros

- Python-native and easy to script

- Flexible for custom pipelines

Cons

- Not a processing engine

- Limited UI and monitoring

Platforms / Deployment

- Linux / Cloud / On-prem

Security & Compliance

- Depends on deployment

- Supports basic authentication

Integrations & Ecosystem

- Hadoop, Spark, databases, cloud storage

Support & Community

- Open-source community

- Growing adoption

#7 — Airflow

Short description: Apache Airflow is a platform to programmatically author, schedule, and monitor batch workflows.

Key Features

- DAG-based workflow orchestration

- Python API for tasks

- Scheduling, retries, and monitoring
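Airflow exposes retry behaviour through operator arguments such as `retries` and `retry_delay`. The sketch below models only that retry loop in plain Python; `run_with_retries` is a hypothetical helper rather than Airflow code, and the injectable `sleep` parameter exists only so the behaviour can be tested without waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    retries: int,
    retry_delay: float,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try the task once, then up to `retries` more times,
    waiting `retry_delay` seconds between attempts."""
    attempt = 0
    while True:
        try:
            return task()
        except Exception:
            if attempt >= retries:
                raise  # retries exhausted: the task is marked failed
            attempt += 1
            sleep(retry_delay)
```

A task that fails twice with a transient error and then succeeds completes with `retries=2` but fails with `retries=1`.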

- Supports Kubernetes, Spark, Hive

Pros

- Flexible and highly extensible

- Active community

Cons

- Requires operational management

- Can be complex for beginners

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- RBAC, encryption

- Compliance via underlying systems

Integrations & Ecosystem

- Hadoop, Spark, cloud services, databases

Support & Community

- Large open-source community

- Commercial managed options

#8 — Google Cloud Dataflow

Short description: Dataflow provides fully managed batch and streaming processing using Apache Beam.

Key Features

- Serverless execution

- Unified batch and streaming

- Autoscaling compute resources

- Integrates with Google Cloud Storage and BigQuery

Pros

- No infrastructure management

- Highly scalable

Cons

- Cloud-only

- Requires learning the Beam APIs

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption

- Cloud compliance features

Integrations & Ecosystem

- BigQuery, Pub/Sub, cloud storage

Support & Community

- Google Cloud support

- Growing user community

#9 — AWS Glue

Short description: AWS Glue is a serverless ETL service supporting batch processing and workflow orchestration.

Key Features

- Auto-generated ETL scripts (Python or Scala)

- Serverless batch processing

- Integration with S3, Redshift, RDS

- Job scheduling and monitoring
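The extract-transform-load pattern that Glue automates can be illustrated without AWS. In this minimal sketch, CSV text stands in for files in S3 and an in-memory SQLite table stands in for a warehouse such as Redshift. The `etl` function, the `sales` table, its columns, and the cleaning rules are all hypothetical; a real Glue job would typically be a generated Spark script instead.

```python
import csv
import io
import sqlite3


def etl(csv_text: str, conn: sqlite3.Connection) -> int:
    """Extract rows from CSV text, clean them, and load them into `sales`.

    Returns the number of rows loaded."""
    cleaned = []
    # Extract: parse the raw CSV rows.
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Transform: cast types, normalise region codes, drop refunds.
        amount = float(row["amount"])
        if amount > 0:
            cleaned.append((row["region"].strip().upper(), amount))
    # Load: write into a warehouse-style table.
    conn.execute("CREATE TABLE IF NOT EXISTS sales (region TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", cleaned)
    conn.commit()
    return len(cleaned)
```

Usage: `etl("region,amount\neu,10\nus,-5\nus,2.5\n", sqlite3.connect(":memory:"))` loads two rows and drops the negative amount.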

Pros

- Fully managed, reduces operational burden

- Tight AWS integration

Cons

- Cloud-only

- Limited for non-AWS environments

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption

- SOC 2, compliance via AWS

Integrations & Ecosystem

- AWS ecosystem, BI tools, warehouses

Support & Community

- AWS support

- Active community

#10 — Databricks Batch

Short description: Databricks provides batch processing on a unified Lakehouse platform, supporting large-scale analytics.

Key Features

- Scalable compute clusters

- Python, Scala, SQL, R APIs

- Integration with Delta Lake

- Workflow scheduling

Pros

- Unified analytics and batch processing

- Easy to scale for large datasets

Cons

- Cloud-dependent

- Higher cost for large workloads

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption, RBAC

- SOC 2, compliance via Databricks

Integrations & Ecosystem

- Delta Lake, BI tools, cloud storage

Support & Community

- Enterprise support

- Databricks community

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Apache Hadoop | Distributed batch | Linux | Cloud / On-prem / Hybrid | HDFS + MapReduce | N/A |
| Apache Spark | Unified analytics | Linux | Cloud / On-prem / Hybrid | In-memory batch | N/A |
| Apache Beam | Cross-platform batch | Linux | Cloud / On-prem / Hybrid | Unified APIs | N/A |
| Apache Flink | Stream & batch | Linux | Cloud / On-prem / Hybrid | Unified processing | N/A |
| Apache Oozie | Hadoop workflows | Linux | On-prem / Hybrid | Job orchestration | N/A |
| Luigi | Python workflows | Linux | Cloud / On-prem | Task dependencies | N/A |
| Airflow | DAG orchestration | Linux | Cloud / On-prem / Hybrid | Flexible scheduling | N/A |
| Google Cloud Dataflow | Cloud batch/stream | Google Cloud | Cloud | Serverless Beam | N/A |
| AWS Glue | Cloud ETL | AWS | Cloud | Managed ETL & batch | N/A |
| Databricks Batch | Lakehouse batch | AWS / Azure / Google Cloud | Cloud | Delta Lake + batch | N/A |

Evaluation & Scoring of Batch Processing Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Hadoop | 9 | 6 | 8 | 7 | 8 | 7 | 6 | 7.45 |
| Spark | 9 | 7 | 8 | 7 | 9 | 7 | 7 | 7.85 |
| Beam | 8 | 7 | 8 | 7 | 8 | 7 | 6 | 7.35 |
| Flink | 8 | 6 | 8 | 7 | 8 | 7 | 6 | 7.20 |
| Oozie | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 6.60 |
| Luigi | 7 | 8 | 7 | 6 | 7 | 6 | 6 | 6.80 |
| Airflow | 8 | 7 | 8 | 7 | 8 | 7 | 6 | 7.35 |
| Dataflow | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| AWS Glue | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Databricks Batch | 9 | 7 | 8 | 8 | 9 | 7 | 7 | 7.95 |
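Each weighted total is the sum of every criterion score multiplied by its column weight. A short Python sketch of that arithmetic follows; the criterion keys are shorthand chosen for this example, and working in integer hundredths keeps the results free of floating-point rounding surprises.

```python
from typing import Dict, List

# Column weights from the scoring table header, in percent (they sum to 100).
WEIGHTS: Dict[str, int] = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}
CRITERIA: List[str] = list(WEIGHTS)  # dicts keep insertion order


def weighted_total(scores: List[int]) -> float:
    """Weighted 0-10 total for scores listed in the table's column order."""
    if len(scores) != len(CRITERIA):
        raise ValueError("expected one score per criterion")
    # Sum of score * percent weight, i.e. the total in hundredths.
    hundredths = sum(s * WEIGHTS[c] for s, c in zip(scores, CRITERIA))
    return hundredths / 100
```

For the Hadoop row, `weighted_total([9, 6, 8, 7, 8, 7, 6])` returns `7.45`.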

Which Batch Processing Framework Is Right for You?

Solo / Freelancer

Luigi and Airflow offer Python-friendly batch orchestration; Luigi is the lighter of the two to run.

SMB

Cloud-managed options like AWS Glue or Google Cloud Dataflow reduce operational overhead.

Mid-Market

Apache Spark or Databricks Batch balances speed, scalability, and analytics integration.

Enterprise

Apache Hadoop delivers enterprise-grade fault tolerance and scale for batch workloads, while Flink and Beam add unified batch/stream processing.

Budget vs Premium

Open-source frameworks reduce licensing cost but increase operational effort. Managed cloud frameworks reduce operations but may have higher recurring costs.

Feature Depth vs Ease of Use

Frameworks like Spark and Beam offer advanced processing capabilities but require expertise. Cloud-managed frameworks simplify adoption.

Integrations & Scalability

Ensure the framework connects with your warehouses, BI tools, and storage so that batch workflows can scale.

Security & Compliance Needs

Select frameworks with access controls, encryption, and compliance features for sensitive data processing.

Frequently Asked Questions (FAQs)

What is a batch processing framework?

A platform to process large datasets in discrete chunks or batches, typically on scheduled intervals.

How is it different from stream processing?

Batch frameworks process data periodically, while stream processing handles data continuously with low latency.

Can small teams use these frameworks?

Yes. Cloud-managed options like AWS Glue or Google Cloud Dataflow reduce operational complexity for small teams.

Are batch frameworks secure?

Most provide encryption and access control; compliance (for example SOC 2) depends largely on how and where the framework is deployed.

Do they support analytics pipelines?

Yes, they support ETL, data aggregation, and analytics workflows.

Can these frameworks scale?

Open-source frameworks like Hadoop and Spark scale across clusters; cloud-managed options auto-scale.

How long does deployment take?

Managed cloud solutions can be deployed quickly; self-hosted clusters take longer.

Can they process historical and new data?

Yes. Batch jobs process historical data in bulk and pick up new data as it accumulates between scheduled runs.

Are they suitable for AI/ML?

Yes, batch processing is ideal for training models on large historical datasets.

How do I choose the right framework?

Consider data volume, latency needs, cloud/on-prem deployment, ease of use, and cost.

Conclusion

Batch processing frameworks remain essential for organizations handling large volumes of data where latency is not critical. Solo developers and small teams can benefit from Python-friendly workflow orchestrators like Luigi and Airflow, while SMBs may prefer cloud-managed options such as AWS Glue or Google Cloud Dataflow for reduced operational complexity. Mid-market organizations benefit from Apache Spark or Databricks Batch for fast, scalable, analytics-ready processing. Enterprises with large datasets and complex pipelines may rely on Apache Hadoop for fault-tolerant batch processing at scale, or on Flink or Beam for unified batch and stream processing. Choosing the right framework means evaluating throughput, scalability, operational complexity, integrations, and security, and pilot testing with key workflows before full deployment. A well-chosen batch framework enables efficient ETL, analytics, and ML workloads that support informed business decisions.