Introduction

MLOps platforms are specialized tools and frameworks that streamline the deployment, monitoring, and management of machine learning models in production. They provide automated workflows for versioning, testing, continuous integration and delivery (CI/CD), model governance, and monitoring, ensuring that AI solutions remain reliable, scalable, and compliant.

With AI adoption accelerating, MLOps platforms are essential for operationalizing machine learning models, reducing technical debt, and ensuring reproducibility across teams.

Real-world use cases include:

- Deploying predictive models into production environments

- Monitoring model performance and drift

- Automating retraining pipelines and CI/CD for ML

- Managing feature stores and datasets

- Ensuring compliance, auditability, and reproducibility

Key evaluation criteria for buyers:

- Model deployment and monitoring capabilities

- CI/CD and workflow automation for ML

- Versioning and reproducibility

- Integration with ML frameworks (TensorFlow, PyTorch)

- Collaboration for data science and engineering teams

- Scalability and distributed infrastructure support

- Feature store and data management support

- Security, governance, and compliance

- Ease of use and operational transparency

- Cloud, on-prem, or hybrid deployment flexibility

Best for:

MLOps platforms are ideal for ML engineers, data scientists, AI teams, and DevOps teams managing production-grade machine learning pipelines.

Not ideal for:

Organizations that only run experiments or small-scale models, with no production deployment, may not need a full MLOps platform.

Key Trends in MLOps Platforms

- End-to-end model lifecycle management from development to production

- Automated retraining pipelines based on model drift detection

- Integration with CI/CD systems for continuous deployment

- Cloud-native platforms with scalable compute and storage

- Support for multiple ML frameworks and languages

- Feature store management for reproducibility

- Monitoring and alerting for model performance

- Governance, auditability, and compliance

- Collaboration tools for data science and engineering teams

- Low-code or no-code deployment options
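To make the drift-triggered retraining trend concrete, here is a minimal sketch of one way such a check might work. It uses the Population Stability Index (PSI), a common drift statistic, to compare a baseline feature sample with live data. This is an illustration, not the method used by any platform in this list: the function names `psi` and `should_retrain`, the bin count, and the 0.2 threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins  # equal-width bins over the baseline range

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(baseline: Sequence[float], live: Sequence[float],
                   threshold: float = 0.2) -> bool:
    """Hypothetical trigger: flag retraining when PSI exceeds the threshold."""
    return psi(baseline, live) > threshold


# Illustrative data only: a uniform baseline and a live sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
print(should_retrain(baseline, baseline))
print(should_retrain(baseline, shifted))
```

In practice, a check like this would run on a schedule against recent inference data, and a positive result would start a retraining pipeline rather than print a value.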

How We Selected These Tools (Methodology)

- Evaluated model deployment and lifecycle management capabilities

- Assessed CI/CD integration and pipeline automation

- Reviewed monitoring, logging, and drift detection features

- Checked integration with ML frameworks and data sources

- Considered scalability for distributed deployments

- Examined feature store and dataset management

- Evaluated team collaboration and workflow automation

- Reviewed security, governance, and compliance features

- Assessed ease of use and operational transparency

- Ensured suitability for freelancers, SMBs, mid-market companies, and enterprises

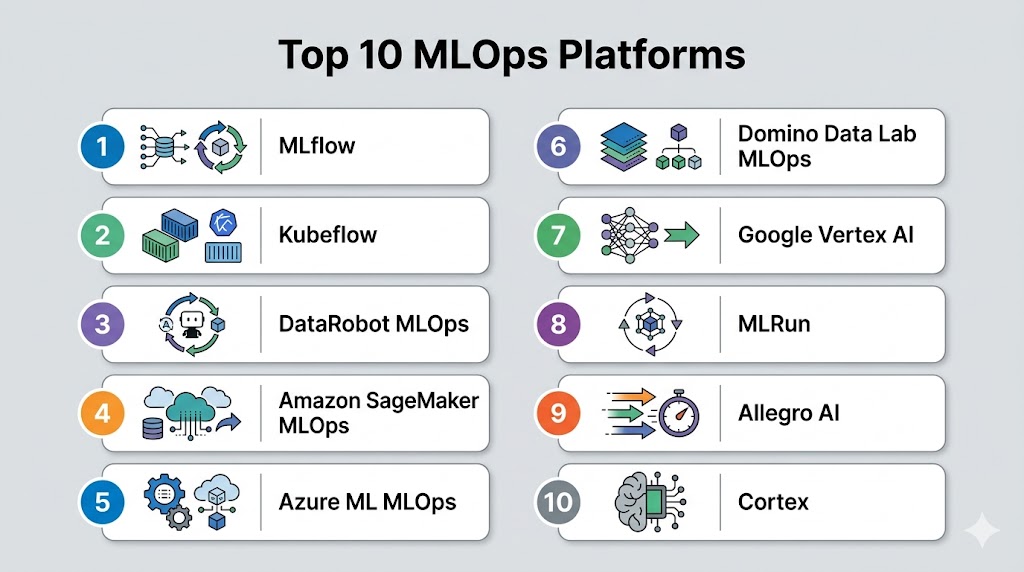

Top 10 MLOps Platforms

#1 — MLflow

Short description: MLflow is an open-source MLOps platform for experiment tracking, model packaging, and deployment.

Key Features

- Experiment tracking and reproducibility

- Model packaging for multiple ML frameworks

- Deployment to REST APIs or cloud services

- MLflow Projects for pipeline automation

- Model registry with version control

Pros

- Open-source and flexible

- Supports multiple ML frameworks

Cons

- Requires infrastructure setup

- Limited advanced monitoring features

Platforms / Deployment

- Linux / Windows / macOS / Cloud

- Cloud / On-prem / Hybrid

Security & Compliance

- Depends on deployment

- Supports authentication and access controls

Integrations & Ecosystem

- TensorFlow, PyTorch, Scikit-learn, cloud platforms

Support & Community

- Large open-source community

- Extensive documentation

#2 — Kubeflow

Short description: Kubeflow is an open-source platform for deploying, orchestrating, and managing ML workflows on Kubernetes.

Key Features

- Kubernetes-native deployment

- Kubeflow Pipelines for building and automating ML workflows

- Experiment tracking and versioning

- Distributed training support

- Integration with cloud and on-prem infrastructure

Pros

- Scalable and production-ready

- Strong integration with cloud-native ecosystems

Cons

- Complex setup

- Requires Kubernetes expertise

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- RBAC and encryption (deployment-dependent)

- Supports enterprise security policies

Integrations & Ecosystem

- TensorFlow, PyTorch, MLflow, cloud storage

Support & Community

- Open-source community

- Active forums and documentation

#3 — DataRobot MLOps

Short description: DataRobot MLOps is a commercial platform for model deployment, monitoring, and lifecycle management.

Key Features

- Automated deployment pipelines

- Model monitoring and drift detection

- Governance and audit trails

- Integration with cloud and on-prem ML workflows

- Collaboration tools for teams

Pros

- Enterprise-ready

- Comprehensive governance and monitoring

Cons

- High licensing cost

- Cloud dependency for some features

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- Encryption, RBAC, SOC 2, GDPR

Integrations & Ecosystem

- DataRobot models, cloud storage, BI tools

Support & Community

- Enterprise support

- Knowledge base and community

#4 — Amazon SageMaker MLOps

Short description: SageMaker provides integrated MLOps capabilities for deployment, monitoring, and automation on AWS.

Key Features

- Model deployment and endpoint management

- Automated CI/CD pipelines

- Model monitoring and drift detection

- Integration with SageMaker Studio and cloud services

- Feature store support

Pros

- Fully managed and scalable

- Tight integration with AWS ecosystem

Cons

- Cloud-only

- Cost scales with usage

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption, SOC 2, GDPR

Integrations & Ecosystem

- AWS services, ML frameworks, BI tools

Support & Community

- AWS support

- Active community

#5 — Azure Machine Learning MLOps

Short description: Azure ML MLOps provides cloud-based pipelines, monitoring, and deployment for enterprise ML workflows.

Key Features

- Automated ML pipelines and CI/CD

- Model versioning and governance

- Integration with Azure DevOps

- Endpoint deployment and monitoring

- Feature store and dataset management

Pros

- Enterprise-grade MLOps

- Cloud-native with Azure ecosystem

Cons

- Cloud-only

- Learning curve for non-Azure users

Platforms / Deployment

- Cloud

Security & Compliance

- SSO, RBAC, encryption, SOC 2, GDPR

Integrations & Ecosystem

- Azure DevOps, Azure Data Lake, BI tools

Support & Community

- Microsoft enterprise support

- Community forums

#6 — Domino Data Lab MLOps

Short description: Domino MLOps provides deployment, versioning, and monitoring tools for production ML pipelines.

Key Features

- Model versioning and reproducibility

- Deployment to cloud or on-prem endpoints

- CI/CD integration for ML pipelines

- Experiment tracking and collaboration

- Scalable compute clusters

Pros

- Strong collaboration features

- Supports reproducibility and governance

Cons

- Enterprise pricing

- On-prem setup requires expertise

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- RBAC, encryption, audit logs, SOC 2, GDPR

Integrations & Ecosystem

- TensorFlow, PyTorch, cloud storage, BI tools

Support & Community

- Enterprise support

- Active community

#7 — Google Vertex AI

Short description: Vertex AI provides integrated MLOps tools for deployment, monitoring, and pipeline automation on GCP.

Key Features

- Model deployment and monitoring

- MLOps pipelines and versioning

- Integration with GCP services

- Pre-trained models and AutoML support

- Scalable cloud infrastructure

Pros

- Fully managed and cloud-native

- Multi-data type support

Cons

- Cloud-only

- GCP vendor lock-in

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption, SOC 2, GDPR

Integrations & Ecosystem

- BigQuery, TensorFlow, cloud storage

Support & Community

- Google Cloud support

- Active community

#8 — MLRun

Short description: MLRun is an open-source MLOps framework for automating deployment and management of ML pipelines.

Key Features

- CI/CD pipelines for ML

- Experiment tracking and logging

- Deployment to Kubernetes or cloud endpoints

- Integration with data and feature stores

- Distributed training support

Pros

- Open-source and flexible

- Supports cloud-native and on-prem deployments

Cons

- Requires Kubernetes knowledge

- Smaller community than commercial platforms

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Deployment-dependent

- Supports encryption and access control

Integrations & Ecosystem

- TensorFlow, PyTorch, MLflow, cloud storage

Support & Community

- Open-source community

- Documentation available

#9 — Allegro AI

Short description: Allegro AI, the company behind ClearML, provides MLOps tools focused on model deployment, versioning, and lifecycle management for AI workflows.

Key Features

- Model registry and versioning

- Deployment pipelines and monitoring

- Experiment tracking and collaboration

- Integration with cloud or on-prem infrastructure

- Supports ML frameworks including TensorFlow and PyTorch

Pros

- Enterprise-ready AI lifecycle management

- Strong focus on reproducibility

Cons

- Licensing cost

- Cloud/on-prem setup complexity

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- Encryption, RBAC, SOC 2, GDPR

Integrations & Ecosystem

- TensorFlow, PyTorch, cloud storage, BI tools

Support & Community

- Enterprise support

- Knowledge base

#10 — Cortex

Short description: Cortex is an open-source MLOps platform for deploying and managing ML models on Kubernetes.

Key Features

- Model deployment via APIs

- Monitoring and logging

- Kubernetes-native scaling

- Supports TensorFlow, PyTorch, and custom models

- CI/CD integration

Pros

- Open-source and cloud-native

- Scales with Kubernetes clusters

Cons

- Requires Kubernetes expertise

- Smaller community

Platforms / Deployment

- Linux / Cloud / On-prem / Hybrid

Security & Compliance

- Deployment-dependent

- Supports RBAC and encryption

Integrations & Ecosystem

- TensorFlow, PyTorch, Kubernetes, cloud storage

Support & Community

- Open-source community

- Documentation available

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| MLflow | Experiment tracking | Linux / Windows / macOS / Cloud | Cloud / On-prem / Hybrid | Model registry & pipelines | N/A |
| Kubeflow | Kubernetes-native ML | Linux / Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Kubernetes pipelines | N/A |
| DataRobot MLOps | Enterprise AI | Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Automated monitoring & governance | N/A |
| Amazon SageMaker MLOps | Cloud ML | Cloud | Cloud | Managed deployment & monitoring | N/A |
| Azure ML MLOps | Cloud ML | Cloud | Cloud | CI/CD pipelines & feature store | N/A |
| Domino Data Lab MLOps | Collaboration | Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Experiment tracking & reproducibility | N/A |
| Google Vertex AI | Cloud ML | Cloud | Cloud | Managed MLOps pipelines | N/A |
| MLRun | Open-source MLOps | Linux / Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Automation & Kubernetes-native | N/A |
| Allegro AI | Enterprise AI | Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Model registry & monitoring | N/A |
| Cortex | Open-source MLOps | Linux / Cloud / On-prem / Hybrid | Cloud / On-prem / Hybrid | Kubernetes deployment & scaling | N/A |

Evaluation & Scoring of MLOps Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| MLflow | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.50 |
| Kubeflow | 8 | 6 | 8 | 7 | 8 | 7 | 7 | 7.35 |
| DataRobot MLOps | 9 | 8 | 8 | 8 | 9 | 8 | 7 | 8.20 |
| Amazon SageMaker MLOps | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Azure ML MLOps | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Domino Data Lab MLOps | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |
| Google Vertex AI | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| MLRun | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| Allegro AI | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |
| Cortex | 7 | 7 | 7 | 7 | 7 | 6 | 7 | 6.90 |
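For readers who want to re-weight the criteria for their own priorities, the weighted total is each criterion score multiplied by its weight, summed, and divided by the total weight. The sketch below shows that arithmetic using the column weights from the scoring table above; the names `WEIGHTS` and `weighted_total` are illustrative, not part of any tool.

```python
# Weights in percent, taken from the scoring table's column headers.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict) -> float:
    """Return the weighted average of 0-10 criterion scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    # Sum integer products first, then divide once, to limit float rounding.
    total = sum(scores[name] * WEIGHTS[name] for name in WEIGHTS)
    return total / sum(WEIGHTS.values())


# Example input: the DataRobot MLOps row from the scoring table.
datarobot = {
    "core": 9,
    "ease": 8,
    "integrations": 8,
    "security": 8,
    "performance": 9,
    "support": 8,
    "value": 7,
}
print(f"{weighted_total(datarobot):.2f}")
```

Changing the values in `WEIGHTS` (for example, raising security for a regulated industry) and recomputing each row gives a ranking tailored to a specific team. Totals computed this way can be compared against the last column of the table.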

Which MLOps Platform Is Right for You?

Solo / Freelancer

MLflow or MLRun provides lightweight, open-source MLOps for small-scale projects and experimentation.

SMB

Google Vertex AI, Azure ML MLOps, or Amazon SageMaker MLOps offers cloud-managed pipelines with low operational overhead.

Mid-Market

DataRobot MLOps or Domino Data Lab MLOps supports collaboration, reproducibility, and scalable deployment.

Enterprise

Kubeflow, Allegro AI, or Cortex provides Kubernetes-native, production-grade MLOps for large-scale AI operations.

Budget vs Premium

Open-source tools reduce licensing costs but require technical expertise. Cloud-managed and enterprise platforms provide full feature sets with governance at higher costs.

Feature Depth vs Ease of Use

Low-code cloud platforms like Vertex AI or SageMaker simplify operations, while Kubeflow and MLRun provide advanced customization for engineers.

Integrations & Scalability

Choose platforms that integrate with ML frameworks, CI/CD tools, feature stores, and cloud/on-prem compute clusters.

Security & Compliance Needs

For enterprise usage, select platforms with RBAC, encryption, audit logs, and regulatory compliance capabilities.

Frequently Asked Questions (FAQs)

What is an MLOps platform?

A platform that manages the deployment, monitoring, and lifecycle of machine learning models in production.

Can non-technical teams use MLOps?

Some cloud-managed platforms offer low-code interfaces, but technical expertise is recommended for complex pipelines.

Are MLOps platforms cloud-only?

No. Many are cloud-native, but some, such as Kubeflow, MLRun, and Domino, support on-prem or hybrid deployment.

Do these platforms support multiple ML frameworks?

Yes, platforms generally support TensorFlow, PyTorch, Scikit-learn, and others.

Can models be monitored after deployment?

Yes, MLOps platforms provide model monitoring, drift detection, and alerting.

Do these platforms handle distributed training?

Many do. Kubernetes-native tools such as Kubeflow and MLRun list distributed training support, and cloud-managed platforms provide scalable compute for larger workloads.

Are they secure and compliant?

Enterprise MLOps platforms provide RBAC, encryption, and audit logs to meet regulatory standards.

How do MLOps platforms integrate with data pipelines?

They connect with cloud storage, feature stores, CI/CD pipelines, and orchestration tools.

Can AutoML models be deployed with MLOps?

Yes, most platforms support AutoML and traditional ML model deployment pipelines.

How do I choose the right MLOps platform?

Consider team size, cloud/on-prem preference, deployment scale, integrations, and regulatory compliance.

Conclusion

MLOps platforms streamline the deployment, monitoring, and governance of machine learning models in production, supporting reproducibility, scalability, and reliability. Freelancers and small teams can use MLflow or MLRun for lightweight, open-source operations. SMBs benefit from cloud-managed solutions such as Vertex AI, SageMaker MLOps, or Azure ML MLOps for low-code automation. Mid-market organizations can adopt DataRobot or Domino Data Lab MLOps for collaboration and reproducibility at scale. Enterprises that need production-grade MLOps may rely on Kubeflow, Allegro AI, or Cortex for end-to-end model lifecycle management. Selecting the right platform involves evaluating scalability, integrations, ease of use, and governance. Pilot testing with critical models helps confirm that the platform meets both technical and business requirements before a full rollout.