Introduction

RAG (Retrieval-Augmented Generation) tooling refers to platforms and frameworks that combine information retrieval systems with generative AI models to produce more accurate, context-aware, and grounded responses. Instead of relying solely on knowledge learned during pre-training, RAG systems fetch relevant data from external sources, such as documents, databases, or APIs, and add it to the model's prompt as context for the generated output.
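The retrieve-then-generate loop can be sketched in a few lines. This is a minimal illustration under stated assumptions: a toy in-memory corpus, a naive word-overlap score standing in for embedding similarity, and no actual model call (the augmented prompt is simply printed). All names and documents are hypothetical.

```python
import re

# Toy in-memory "knowledge base" (hypothetical documents).
DOCS = [
    "Refunds are processed within 5 business days of approval.",
    "Support is available Monday to Friday, 9am to 5pm.",
    "Premium plans include priority email support.",
]

def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Augment the user question with the retrieved passages."""
    sources = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the sources below.\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {query}"
    )

question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, DOCS, k=1))
print(prompt)
# In a real pipeline, `prompt` would now be sent to an LLM.
```

Swapping `tokenize` for an embedding model and `DOCS` for a vector store gives the shape most RAG tools automate.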

With the rise of enterprise AI applications, RAG has become a standard technique for reducing hallucinations and improving factual accuracy. Organizations are increasingly adopting RAG pipelines to power chatbots, knowledge assistants, search systems, and internal AI tools that depend on up-to-date and proprietary data.

Common use cases include:

- Enterprise knowledge assistants

- AI-powered search systems

- Customer support automation

- Document question answering

- Internal data retrieval and insights

Key evaluation criteria:

- Retrieval accuracy and indexing capabilities

- Integration with vector databases

- LLM compatibility and flexibility

- Scalability and performance

- Data security and access controls

- Ease of pipeline orchestration

- Support for structured and unstructured data

- Real-time query processing

Best for: AI engineers, data teams, enterprises building LLM-powered applications, and organizations managing large document repositories.

Not ideal for: Small projects with static data or applications that do not require dynamic knowledge retrieval.

Key Trends in RAG (Retrieval-Augmented Generation) Tooling for 2026 and Beyond

- Hybrid search combining vector and keyword retrieval

- Real-time indexing and streaming data pipelines

- AI-powered document chunking and embedding optimization

- Multi-modal RAG supporting text, images, and audio

- Built-in evaluation and feedback loops

- Integration with AI agents and autonomous workflows

- Privacy-preserving retrieval techniques

- Fine-tuned embeddings for domain-specific use cases

- Serverless and managed RAG platforms

- End-to-end orchestration with observability
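Hybrid search needs a way to merge a keyword ranking with a vector ranking. One widely used method is Reciprocal Rank Fusion (RRF), which scores each document as the sum of 1 / (k + rank) over every list it appears in. The sketch below assumes both rankings are already computed and uses the conventional constant k = 60; the document IDs are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in
    (rank starts at 1), so items ranked well by several retrievers rise.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)

keyword_hits = ["doc_a", "doc_b", "doc_c"]  # e.g. from BM25
vector_hits = ["doc_c", "doc_a", "doc_d"]   # e.g. from embedding similarity

print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# → ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Because RRF uses only ranks, it sidesteps the problem that BM25 scores and cosine similarities live on different scales.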

How We Selected These Tools (Methodology)

- Evaluated adoption across AI and developer communities

- Assessed retrieval and generation integration capabilities

- Compared support for vector databases and embeddings

- Reviewed scalability and production readiness

- Analyzed integration with modern AI stacks

- Considered ease of use and developer experience

- Included both open-source and enterprise solutions

- Focused on real-world deployment scenarios

- Balanced flexibility with performance

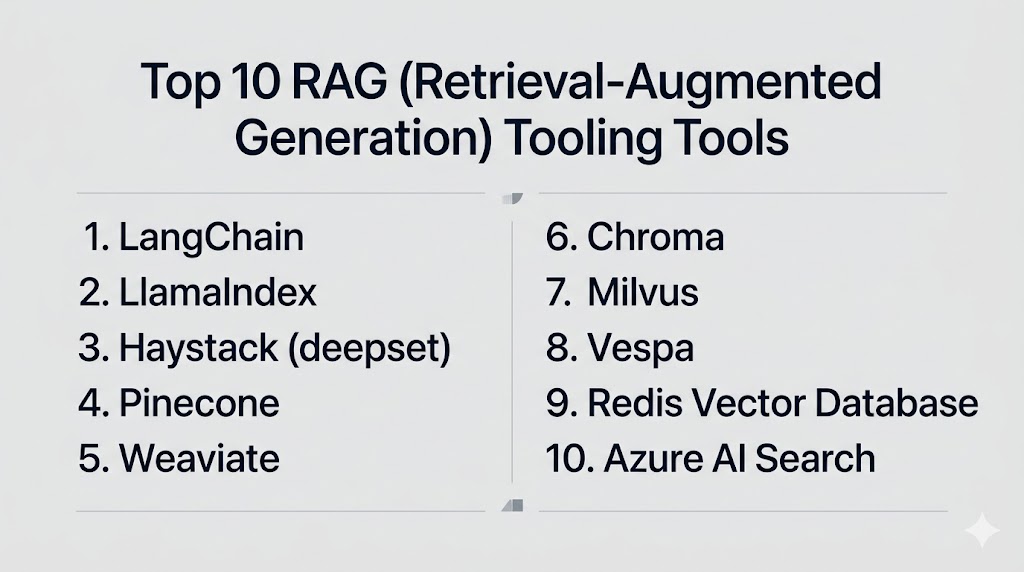

Top 10 RAG (Retrieval-Augmented Generation) Tools

#1 — LangChain

Short description:

LangChain is one of the most widely used frameworks for building RAG applications, enabling developers to connect LLMs with external data sources and create complex AI workflows.

Key Features

- Chain-based orchestration

- Document loaders and retrievers

- Memory and context handling

- Integration with vector databases

- Agent-based workflows
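Chain-based orchestration means each step (retrieval, prompt formatting, model call) consumes the previous step's output, so steps compose into a pipeline. LangChain's expression language composes components with the `|` operator; the sketch below imitates that style in plain Python and is not LangChain's API. The `Step` class and the fake model step are hypothetical.

```python
from typing import Any, Callable

class Step:
    """A pipeline stage that can be chained with `|` (hypothetical helper)."""

    def __init__(self, fn: Callable[[Any], Any]):
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # Compose: run self first, then feed its output into `other`.
        return Step(lambda x: other.fn(self.fn(x)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)

# Stand-ins for a retriever, a prompt template, and a model call.
retrieve = Step(lambda q: {"question": q, "context": "Paris is the capital of France."})
format_prompt = Step(lambda d: f"Context: {d['context']}\nQuestion: {d['question']}")
fake_llm = Step(lambda prompt: f"[model answer based on {len(prompt)} chars of prompt]")

chain = retrieve | format_prompt | fake_llm
print(chain.invoke("What is the capital of France?"))
```

Keeping each step a small callable makes it easy to swap the retriever or model without touching the rest of the chain.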

Pros

- Highly flexible and extensible

- Large developer community

Cons

- Steep learning curve

- Frequent API changes can break existing code

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies / N/A

Integrations & Ecosystem

LangChain integrates with a wide range of AI tools, vector databases, and APIs, making it a central hub for RAG development.

- OpenAI

- Pinecone

- Weaviate

- FAISS

Support & Community

Extremely active community with extensive documentation.

#2 — LlamaIndex

Short description:

LlamaIndex is designed specifically for building RAG pipelines by connecting LLMs with structured and unstructured data sources.

Key Features

- Data connectors

- Indexing and retrieval

- Query engines

- Embedding optimization

- Document parsing
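Before indexing, frameworks such as LlamaIndex split documents into smaller chunks (LlamaIndex calls them nodes) so retrieval returns focused passages. A minimal sketch of fixed-size chunking with overlap, measured in words for simplicity; real splitters typically count tokens and respect sentence boundaries, and the `chunk_size` and `overlap` values here are illustrative assumptions.

```python
def chunk_words(text: str, chunk_size: int = 5, overlap: int = 2) -> list[str]:
    """Split text into windows of `chunk_size` words, each sharing `overlap`
    words with the previous window so context is not cut mid-thought."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the text
    return chunks

text = "one two three four five six seven eight nine"
print(chunk_words(text))
# → ['one two three four five', 'four five six seven eight', 'seven eight nine']
```

Larger overlaps reduce the chance that an answer is split across chunks, at the cost of storing and embedding more redundant text.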

Pros

- Purpose-built for RAG

- Easy data integration

Cons

- Limited beyond RAG use cases

- Requires technical setup

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies / N/A

Integrations & Ecosystem

LlamaIndex integrates with data sources and vector stores to enable efficient retrieval.

- APIs

- Vector databases

- File systems

Support & Community

Growing community with strong developer support.

#3 — Haystack (deepset)

Short description:

Haystack is an open-source framework for building search systems and RAG pipelines with strong support for NLP workflows.

Key Features

- Document indexing

- Retrieval pipelines

- Multi-model support

- Evaluation tools

- REST APIs
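Retrieval evaluation usually reports metrics such as recall@k (the share of relevant documents found in the top k results) and mean reciprocal rank (MRR, the average of 1 / rank of the first relevant hit). The sketch below computes both from hand-labeled results; it illustrates the metrics rather than Haystack's evaluation API, and the evaluation set is hypothetical.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / position of the first relevant document, or 0 if none is found."""
    for position, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / position
    return 0.0

# Hypothetical evaluation set: (retrieved ranking, relevant IDs) per query.
eval_set = [
    (["d3", "d1", "d7"], {"d1"}),
    (["d2", "d5", "d9"], {"d2", "d9"}),
    (["d4", "d6", "d8"], {"d0"}),
]

k = 2
mean_recall = sum(recall_at_k(r, rel, k) for r, rel in eval_set) / len(eval_set)
mrr = sum(reciprocal_rank(r, rel) for r, rel in eval_set) / len(eval_set)
print(f"recall@{k} = {mean_recall:.3f}, MRR = {mrr:.3f}")
# → recall@2 = 0.500, MRR = 0.500
```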

Pros

- Production-ready

- Flexible architecture

Cons

- Setup complexity

- Requires infrastructure management

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies / N/A

Integrations & Ecosystem

Supports integration with search systems and databases for scalable retrieval.

- Elasticsearch

- OpenSearch

- FAISS

Support & Community

Active open-source community.

#4 — Pinecone

Short description:

Pinecone is a managed vector database optimized for similarity search, making it a core component of many RAG systems.

Key Features

- Vector search

- Real-time indexing

- Scalability

- Low-latency queries

- Managed infrastructure
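At its core, a vector database answers one question: which stored embeddings are most similar to a query embedding? The brute-force sketch below computes cosine similarity against every stored vector; managed services like Pinecone rely on approximate nearest-neighbor indexes to avoid this linear scan at scale. The 3-dimensional vectors and IDs are toy values, and this is not Pinecone's client API.

```python
import heapq
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Exact nearest-neighbor search by scanning every stored vector."""
    scored = ((doc_id, cosine(query, vec)) for doc_id, vec in index.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])

index = {
    "pricing": [0.9, 0.1, 0.0],
    "refunds": [0.7, 0.6, 0.1],
    "careers": [0.0, 0.2, 0.9],
}
print([doc_id for doc_id, _ in top_k([1.0, 0.2, 0.0], index)])
# → ['pricing', 'refunds']
```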

Pros

- High performance

- Fully managed service

Cons

- Cost considerations

- Vendor lock-in

Platforms / Deployment

Cloud

Security & Compliance

Encryption, access controls

Integrations & Ecosystem

Pinecone integrates with major AI frameworks and tools to enable fast vector search.

- LangChain

- LlamaIndex

- OpenAI

Support & Community

Strong documentation and enterprise support.

#5 — Weaviate

Short description:

Weaviate is an open-source vector database with built-in AI capabilities for semantic search and RAG applications.

Key Features

- Vector search

- Graph-based relationships

- Hybrid search

- Schema management

- API-first design
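Weaviate's hybrid search blends keyword (BM25) and vector results, with an `alpha` parameter where 1 means pure vector search and 0 means pure keyword search. The sketch below shows one score-based way to do this: min-max normalize each score set, then take an alpha-weighted sum. It approximates the idea rather than reproducing Weaviate's exact fusion algorithm, and the scores are hypothetical.

```python
def min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so BM25 and cosine values are comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    span = hi - lo
    return {k: (v - lo) / span if span else 1.0 for k, v in scores.items()}

def hybrid(keyword: dict[str, float], vector: dict[str, float], alpha: float = 0.5) -> list[str]:
    """alpha=1 ranks by vector score only; alpha=0 by keyword score only.
    A document missing from one result set contributes 0 for that side."""
    kw, vec = min_max(keyword), min_max(vector)
    docs = kw.keys() | vec.keys()
    fused = {d: alpha * vec.get(d, 0.0) + (1 - alpha) * kw.get(d, 0.0) for d in docs}
    return sorted(fused, key=fused.__getitem__, reverse=True)

bm25_scores = {"a": 12.0, "b": 4.0, "c": 8.0}      # raw BM25 scores
vector_scores = {"a": 0.70, "b": 0.95, "c": 0.80}  # cosine similarities

print(hybrid(bm25_scores, vector_scores, alpha=0.0))  # → ['a', 'c', 'b']
print(hybrid(bm25_scores, vector_scores, alpha=1.0))  # → ['b', 'c', 'a']
```

Intermediate `alpha` values let you tune how much exact term matches (product codes, names) count relative to semantic similarity.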

Pros

- Open-source flexibility

- Built-in ML capabilities

Cons

- Requires setup

- Scaling complexity

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

RBAC, encryption

Integrations & Ecosystem

Weaviate integrates with AI frameworks and supports custom extensions.

- APIs

- ML tools

- Vector pipelines

Support & Community

Active community and documentation.

#6 — Chroma

Short description:

Chroma is a lightweight vector database designed for embedding storage and retrieval in RAG applications.

Key Features

- Embedding storage

- Fast retrieval

- Simple API

- Lightweight design

- Local deployment
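Lightweight stores like Chroma let you attach metadata to each embedding and filter on it at query time (Chroma's `query` accepts a `where` filter). The sketch below mimics that behavior in plain Python with a hypothetical `LocalCollection` class; it is not Chroma's implementation, and it ranks by dot product on toy vectors.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class LocalCollection:
    """Hypothetical in-memory collection of ids, embeddings, and metadata."""
    ids: list[str] = field(default_factory=list)
    embeddings: list[list[float]] = field(default_factory=list)
    metadatas: list[dict] = field(default_factory=list)

    def add(self, doc_id: str, embedding: list[float], metadata: dict) -> None:
        self.ids.append(doc_id)
        self.embeddings.append(embedding)
        self.metadatas.append(metadata)

    def query(self, embedding: list[float], n_results: int = 1,
              where: Optional[dict] = None) -> list[str]:
        """Keep items whose metadata matches every key in `where`,
        then rank the survivors by dot product with the query."""
        where = where or {}
        hits = [
            (sum(a * b for a, b in zip(embedding, emb)), doc_id)
            for doc_id, emb, meta in zip(self.ids, self.embeddings, self.metadatas)
            if all(meta.get(key) == value for key, value in where.items())
        ]
        hits.sort(reverse=True)  # highest similarity first
        return [doc_id for _, doc_id in hits[:n_results]]

collection = LocalCollection()
collection.add("faq-en", [1.0, 0.0], {"lang": "en"})
collection.add("faq-de", [0.9, 0.1], {"lang": "de"})
print(collection.query([1.0, 0.0]))                        # → ['faq-en']
print(collection.query([1.0, 0.0], where={"lang": "de"}))  # → ['faq-de']
```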

Pros

- Easy to use

- Developer-friendly

Cons

- Limited enterprise features

- Scaling limitations

Platforms / Deployment

Self-hosted

Security & Compliance

Varies / N/A

Integrations & Ecosystem

Chroma integrates easily with RAG frameworks and local workflows.

- LangChain

- Python

Support & Community

Growing developer community.

#7 — Milvus

Short description:

Milvus is a high-performance open-source vector database designed for large-scale similarity search in AI applications.

Key Features

- Distributed architecture

- High scalability

- Vector indexing

- Real-time search

- Multi-cloud support
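Vector indexing is what lets Milvus avoid comparing a query against every stored vector. One index family it offers is IVF (for example `IVF_FLAT`): vectors are grouped under their nearest centroid, and a query scans only the `nprobe` closest groups. A simplified sketch, assuming the centroids are already given (in practice they come from k-means training) and using squared Euclidean distance; this is not Milvus code.

```python
from collections import defaultdict

Vector = tuple[float, ...]

def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def build_ivf(vectors: dict[str, Vector], centroids: list[Vector]) -> dict[int, list[str]]:
    """Assign every vector ID to the bucket of its nearest centroid."""
    buckets: dict[int, list[str]] = defaultdict(list)
    for vec_id, vec in vectors.items():
        nearest = min(range(len(centroids)), key=lambda c: sq_dist(vec, centroids[c]))
        buckets[nearest].append(vec_id)
    return buckets

def search_ivf(query: Vector, vectors: dict[str, Vector], centroids: list[Vector],
               buckets: dict[int, list[str]], nprobe: int = 1, k: int = 1) -> list[str]:
    """Scan only the `nprobe` buckets whose centroids are closest to the query."""
    probe = sorted(range(len(centroids)), key=lambda c: sq_dist(query, centroids[c]))[:nprobe]
    candidates = [vid for c in probe for vid in buckets.get(c, [])]
    return sorted(candidates, key=lambda vid: sq_dist(query, vectors[vid]))[:k]

vectors = {"a": (0.1, 0.2), "b": (0.2, 0.1), "c": (5.0, 5.1), "d": (5.2, 4.9)}
centroids = [(0.0, 0.0), (5.0, 5.0)]
buckets = build_ivf(vectors, centroids)
print(search_ivf((4.9, 5.0), vectors, centroids, buckets, nprobe=1, k=2))
# → ['c', 'd']
```

Raising `nprobe` scans more buckets, which improves recall when a true neighbor sits near a bucket boundary, at the cost of higher latency.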

Pros

- Highly scalable

- Open-source

Cons

- Complex setup

- Requires infrastructure

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

RBAC, encryption

Integrations & Ecosystem

Milvus integrates with AI pipelines and vector search ecosystems.

- Kubernetes

- AI frameworks

- APIs

Support & Community

Strong open-source community.

#8 — Vespa

Short description:

Vespa is a real-time data serving platform designed for search, recommendation, and RAG systems at scale.

Key Features

- Real-time indexing

- Hybrid search

- Ranking models

- Distributed system

- Low latency
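Vespa's ranking models can run in phases: a cheap first-phase expression scores every match, and a more expensive second-phase expression re-ranks only the top candidates (Vespa configures that count with `rerank-count`). The sketch below shows the two-phase pattern with plain functions; both scoring functions are hypothetical stand-ins, not Vespa rank expressions.

```python
from typing import Callable

def two_phase_rank(
    docs: list[str],
    cheap_score: Callable[[str], float],
    expensive_score: Callable[[str], float],
    rerank_count: int = 2,
) -> list[str]:
    """Score all docs cheaply, then re-score only the best `rerank_count`."""
    first = sorted(docs, key=cheap_score, reverse=True)
    head, tail = first[:rerank_count], first[rerank_count:]
    # Only the head pays for the expensive model; the tail keeps its order.
    reranked = sorted(head, key=expensive_score, reverse=True)
    return reranked + tail

# Hypothetical scores: a fast keyword score and a slow "model" score.
keyword = {"d1": 3.0, "d2": 2.5, "d3": 1.0}
model = {"d1": 0.2, "d2": 0.9, "d3": 0.99}

print(two_phase_rank(list(keyword), keyword.get, model.get, rerank_count=2))
# → ['d2', 'd1', 'd3']
```

Note that `d3` would win under the expensive model but is never re-scored because its cheap score put it outside the re-rank window; the re-rank count trades latency against this kind of miss.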

Pros

- High performance

- Scalable architecture

Cons

- Complex deployment

- Learning curve

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports integration with large-scale data systems and search pipelines.

- APIs

- Data pipelines

Support & Community

Active community with enterprise backing.

#9 — Redis Vector Database

Short description:

Redis offers vector search capabilities as part of its in-memory database, enabling fast retrieval for RAG applications.

Key Features

- In-memory vector search

- Low latency

- Real-time updates

- Scalable architecture

- Multi-purpose database
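When vectors are stored in Redis hashes for vector search, they are typically written as raw binary blobs of 32-bit floats (matching an index field declared with `TYPE FLOAT32`) rather than as text. A stdlib-only sketch of that encoding with `struct`; the little-endian byte order is an assumption that matches common client examples, so verify it against your own setup.

```python
import struct

def to_float32_blob(vector: list[float]) -> bytes:
    """Pack floats as little-endian float32 (4 bytes per dimension)."""
    return struct.pack(f"<{len(vector)}f", *vector)

def from_float32_blob(blob: bytes) -> list[float]:
    """Unpack a float32 blob back into a list of Python floats."""
    count = len(blob) // 4
    return list(struct.unpack(f"<{count}f", blob))

embedding = [0.25, -1.5, 3.0]
blob = to_float32_blob(embedding)
print(len(blob))                # → 12 (3 dimensions x 4 bytes)
print(from_float32_blob(blob))  # → [0.25, -1.5, 3.0]
# With a client such as redis-py, the blob would go into a hash field, e.g.
# r.hset("doc:1", mapping={"embedding": blob})  (hypothetical key and field).
```

Values that are not exactly representable in float32 (for example 0.1) come back slightly changed after the round trip, which is normally harmless for similarity search.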

Pros

- Extremely fast

- Versatile

Cons

- Memory cost

- Requires tuning

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

RBAC, encryption

Integrations & Ecosystem

Redis integrates with many applications and frameworks for real-time data retrieval.

- APIs

- Cloud platforms

- AI frameworks

Support & Community

Large global community.

#10 — Azure AI Search

Short description:

Azure AI Search provides enterprise-grade search and indexing capabilities integrated with Microsoft’s AI ecosystem for RAG applications.

Key Features

- Full-text and vector search

- Indexing pipelines

- AI enrichment

- Hybrid search

- Scalability
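Azure AI Search's indexing pipelines can run AI enrichment steps (grouped into what Azure calls a skillset) on each document before it is written to the index. The sketch below shows the general shape of such a pipeline as an ordered list of enrichment functions; the two skills are trivial hypothetical stand-ins for services such as OCR, language detection, or key-phrase extraction, and none of this is the Azure SDK.

```python
from typing import Callable

Document = dict[str, object]
Skill = Callable[[Document], Document]

def add_word_count(doc: Document) -> Document:
    """Hypothetical skill: record how many words the content has."""
    return {**doc, "word_count": len(str(doc["content"]).split())}

def add_keywords(doc: Document) -> Document:
    """Hypothetical skill: naive 'key phrases' = capitalized words."""
    words = str(doc["content"]).split()
    return {**doc, "keywords": sorted({w.strip(".,") for w in words if w[:1].isupper()})}

def run_pipeline(docs: list[Document], skills: list[Skill]) -> list[Document]:
    """Apply each enrichment skill in order, producing index-ready records."""
    enriched = []
    for doc in docs:
        for skill in skills:
            doc = skill(doc)  # each skill returns a new, extended record
        enriched.append(doc)
    return enriched

raw = [{"id": "1", "content": "Contoso ships RAG features on Azure."}]
for record in run_pipeline(raw, [add_word_count, add_keywords]):
    print(record)
```

Because each skill returns a new record, the raw input stays untouched and skills can be reordered or tested in isolation.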

Pros

- Enterprise-ready

- Strong Azure integration

Cons

- Azure dependency

- Pricing complexity

Platforms / Deployment

Cloud

Security & Compliance

MFA, RBAC, encryption

Integrations & Ecosystem

Deep integration with Microsoft services and enterprise systems.

- Azure

- Power BI

- APIs

Support & Community

Enterprise-level support from Microsoft.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | RAG orchestration | Python/Web | Cloud/Self-hosted | Workflow chaining | N/A |
| LlamaIndex | Data indexing | Python | Cloud/Self-hosted | RAG-focused indexing | N/A |
| Haystack | Search pipelines | Web/API | Cloud/Self-hosted | NLP pipelines | N/A |
| Pinecone | Vector search | Web | Cloud | Managed DB | N/A |
| Weaviate | Semantic search | Web/API | Cloud/Self-hosted | Hybrid search | N/A |
| Chroma | Lightweight RAG | Python | Self-hosted | Simple embeddings | N/A |
| Milvus | Large-scale search | Web/API | Cloud/Self-hosted | Distributed system | N/A |
| Vespa | Real-time search | Web/API | Cloud/Self-hosted | Low latency | N/A |
| Redis Vector | Fast retrieval | Multi-platform | Cloud/Self-hosted | In-memory search | N/A |
| Azure AI Search | Enterprise search | Web | Cloud | AI enrichment | N/A |

Evaluation & Scoring of RAG (Retrieval-Augmented Generation) Tooling

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 9 | 6 | 8 | 9 | 8 | 8.2 |
| LlamaIndex | 9 | 8 | 8 | 6 | 8 | 8 | 8 | 8.1 |
| Haystack | 8 | 7 | 8 | 6 | 8 | 7 | 8 | 7.6 |
| Pinecone | 9 | 8 | 9 | 8 | 9 | 8 | 7 | 8.4 |
| Weaviate | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.7 |
| Chroma | 7 | 9 | 7 | 5 | 7 | 6 | 9 | 7.3 |
| Milvus | 9 | 6 | 8 | 7 | 9 | 7 | 8 | 7.9 |
| Vespa | 8 | 6 | 7 | 6 | 9 | 7 | 7 | 7.2 |
| Redis Vector | 8 | 8 | 8 | 7 | 9 | 8 | 7 | 7.9 |
| Azure AI Search | 9 | 7 | 9 | 9 | 9 | 9 | 7 | 8.4 |

How to interpret scores:

These scores give a comparative view across core capabilities, ease of use, integrations, security, performance, support, and value. Higher totals indicate tools that are more balanced across all criteria, while lower-scoring tools may still excel in specific niches such as lightweight local development or large-scale distributed deployments.
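The weighted totals combine the seven criterion scores using the percentages in the column headers. A sketch of that calculation, using the LangChain row as input; rounding half-up to one decimal is an assumption (the table does not state a rounding rule), and integer arithmetic avoids floating-point surprises at the .x5 boundary.

```python
# Weights (in percent) from the scoring table headers.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}
assert sum(WEIGHTS.values()) == 100  # sanity check: weights cover 100%

def weighted_total(scores: dict[str, int]) -> float:
    """Weighted average of 0-10 scores, rounded half-up to one decimal."""
    total_x100 = sum(scores[name] * weight for name, weight in WEIGHTS.items())
    tenths = (total_x100 + 5) // 10  # integer half-up rounding to 0.1
    return tenths / 10

langchain = {
    "core": 9, "ease": 7, "integrations": 9, "security": 6,
    "performance": 8, "support": 9, "value": 8,
}
print(weighted_total(langchain))  # → 8.2 (815 / 100 = 8.15, rounded up)
```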

Which RAG (Retrieval-Augmented Generation) Tool Is Right for You?

Solo / Freelancer

Use Chroma or LlamaIndex for simple and lightweight RAG applications without heavy infrastructure.

SMB

LangChain combined with Pinecone offers a scalable and manageable setup for growing teams.

Mid-Market

Weaviate or Redis Vector provide a balance of performance and flexibility.

Enterprise

Azure AI Search, Pinecone, and Milvus are ideal for large-scale, production-ready deployments.

Budget vs Premium

- Budget: Chroma, Haystack

- Premium: Pinecone, Azure AI Search

Feature Depth vs Ease of Use

- Deep features: LangChain, Milvus

- Easy to use: Chroma, LlamaIndex

Integrations & Scalability

Choose tools with strong API ecosystems and compatibility with your AI stack.

Security & Compliance Needs

Enterprises should prioritize tools with strong access controls and compliance support.

Frequently Asked Questions (FAQs)

What is RAG in AI?

RAG combines retrieval systems with generative models to produce more accurate and context-aware responses by using external data sources.

Why is RAG important?

It reduces hallucinations by grounding AI outputs in retrieved source data, which improves reliability, although it does not eliminate errors entirely.

Do I need a vector database for RAG?

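Not strictly. Most RAG systems use a vector database or vector index for efficient similarity search, but keyword search engines, or a simple in-memory index for small datasets, can also serve as the retriever.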
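Classic keyword ranking such as BM25 can act as a retriever without any vector database, either alone or as the keyword half of hybrid search. A compact Okapi BM25 sketch using k1 = 1.2 and b = 0.75 (Lucene's defaults) and the Lucene-style IDF, which stays non-negative; the regex tokenizer and the three-document corpus are simplified assumptions.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query: str, docs: list[str], k1: float = 1.2, b: float = 0.75) -> list[float]:
    """Score every document against the query with Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: in how many documents each term appears.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

docs = [
    "how to reset your password",
    "password policy requires twelve characters",
    "office opening hours",
]
scores = bm25_scores("reset password", docs)
best = max(range(len(docs)), key=scores.__getitem__)
print(docs[best])  # → how to reset your password
```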

Can RAG work with any LLM?

Most modern LLMs can be integrated with RAG pipelines using APIs and frameworks.

Is RAG suitable for real-time applications?

Yes, many tools support low-latency retrieval for real-time use cases.

How secure are RAG systems?

Security depends on implementation, but enterprise tools provide encryption and access controls.

What industries use RAG?

Finance, healthcare, e-commerce, and SaaS companies widely use RAG systems.

Is RAG expensive to implement?

Costs vary with the infrastructure and tools used; the main drivers are typically embedding generation, vector storage, query volume, and LLM inference.

Can I build RAG without coding?

Some platforms offer low-code solutions, but most require development effort.

How do I choose the right RAG tool?

Focus on scalability, integration, and your specific use case requirements.

Conclusion

RAG (Retrieval-Augmented Generation) tooling has quickly become a foundational layer for building reliable and context-aware AI applications. As organizations move beyond basic generative AI use cases, the need for accurate, up-to-date, and grounded responses is driving the adoption of RAG architectures. These tools bridge the gap between a model's static training knowledge and dynamic real-world data, which can significantly improve output quality.

The ecosystem offers a wide range of solutions, from lightweight options like LlamaIndex and Chroma to enterprise-grade platforms like Azure AI Search and Pinecone, each suited to different scales and complexity levels. There is no single best tool: the right choice depends on your data architecture, team expertise, and performance requirements. Teams should carefully evaluate retrieval accuracy, scalability, integration capabilities, and security before making a decision.

A practical approach is to start with a small prototype, measure retrieval performance, and scale gradually based on real-world usage. As RAG systems evolve, pairing them with observability and evaluation tools will become increasingly important for maintaining quality. Investing in the right RAG tooling early can accelerate AI adoption while reducing the risks of hallucinations and outdated information. Start by shortlisting two or three tools that fit your stack, run pilot implementations, and validate results before full-scale deployment.