Introduction

LLM orchestration frameworks are platforms and libraries that coordinate, manage, and integrate large language models (LLMs) into real-world applications. They let developers connect models with data sources, APIs, memory systems, and workflows so that AI systems can perform complex, multi-step tasks.

As organizations increasingly adopt generative AI, orchestration frameworks have become essential for building scalable, production-ready applications. They simplify how LLMs interact with external tools, manage context, and execute workflows, making them a critical layer in modern AI architectures.
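To make the idea of an orchestration layer concrete, here is a minimal sketch in plain Python. It is not the API of any framework covered in this article: `fake_llm`, `lookup_order`, and the `Orchestrator` class are hypothetical names invented for illustration, and the model call is a stub. It shows the three jobs described above: calling an external tool, managing context (memory), and running the steps in order.

```python
from typing import Callable, Dict, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns a canned reply."""
    return f"[model reply to: {prompt}]"


def lookup_order(order_id: str) -> str:
    """Hypothetical tool: pretends to query an order database."""
    orders = {"A100": "shipped", "B200": "processing"}
    return orders.get(order_id, "unknown")


class Orchestrator:
    """Toy orchestration layer: tool call, prompt assembly, model call, memory."""

    def __init__(self, llm: Callable[[str], str],
                 tools: Dict[str, Callable[[str], str]]) -> None:
        self.llm = llm
        self.tools = tools
        self.memory: List[str] = []  # running conversation context

    def run(self, user_message: str, tool_name: str, tool_arg: str) -> str:
        # Step 1: call the external tool. Here the caller picks the tool,
        # a simplification; real frameworks often let the model choose.
        observation = self.tools[tool_name](tool_arg)
        # Step 2: assemble the prompt from stored context plus the tool result.
        context = "\n".join(self.memory)
        prompt = f"{context}\nUser: {user_message}\nTool result: {observation}".strip()
        # Step 3: call the model.
        reply = self.llm(prompt)
        # Step 4: store the turn so later calls can see it.
        self.memory.append(f"User: {user_message}")
        self.memory.append(f"Assistant: {reply}")
        return reply


orchestrator = Orchestrator(fake_llm, {"lookup_order": lookup_order})
print(orchestrator.run("Where is my order?", "lookup_order", "A100"))
```

Real frameworks add much more on top of this pattern (retries, streaming, tracing, model-driven tool selection), but the basic shape of the loop is similar.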

Real-world use cases include:

- Building AI-powered chatbots and assistants

- Creating Retrieval-Augmented Generation (RAG) pipelines

- Automating workflows using LLMs and APIs

- Coordinating multi-agent AI systems

- Supporting knowledge management and enterprise search

Key evaluation criteria for buyers:

- Workflow orchestration capabilities

- Integration with APIs, databases, and tools

- Support for RAG and memory systems

- Multi-agent support

- Scalability and performance

- Security and governance

- Developer experience and flexibility

- Monitoring and observability

- Deployment flexibility (cloud/on-prem/hybrid)

- Community and ecosystem

Best for:

LLM orchestration frameworks are ideal for AI engineers, developers, startups, and enterprises building advanced AI applications.

Not ideal for:

Simple applications that only require direct LLM API calls without orchestration.

Key Trends in LLM Orchestration Frameworks

- Rise of RAG-based architectures

- Multi-agent orchestration systems

- Integration with vector databases and knowledge bases

- Low-code and no-code orchestration tools

- Real-time LLM pipelines and workflows

- Observability and monitoring tools for LLMs

- Hybrid deployment (cloud + on-prem)

- Composable AI architectures

- Integration with enterprise systems and APIs

- Focus on scalability and production readiness

How We Selected These Tools (Methodology)

- Evaluated orchestration and workflow capabilities

- Assessed integration with LLMs, APIs, and databases

- Reviewed support for RAG and memory systems

- Checked multi-agent capabilities

- Considered developer experience and flexibility

- Examined scalability and performance

- Evaluated security and governance features

- Reviewed community adoption and ecosystem

- Considered open-source vs managed frameworks

- Ensured applicability across SMB to enterprise environments

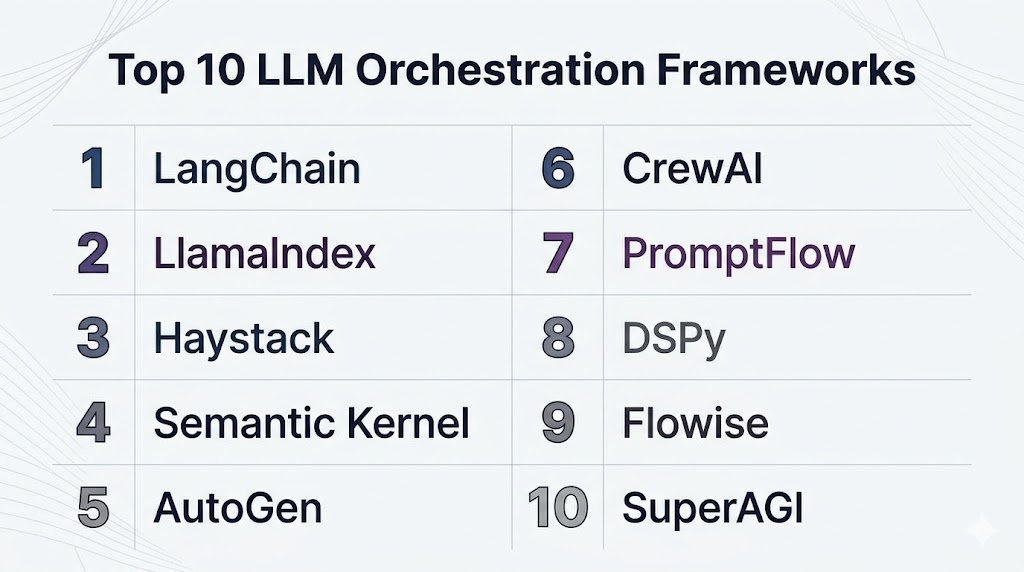

Top 10 LLM Orchestration Frameworks

#1 — LangChain

Short description: LangChain is one of the most widely used frameworks for building LLM-powered applications, enabling integration with tools, APIs, and data sources.

Key Features

- Workflow orchestration

- Tool and API integration

- Memory and context handling

- RAG support

- Multi-agent systems

- Extensive ecosystem

Pros

- Highly flexible

- Large community

Cons

- Complex setup

- Rapid changes

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- APIs, databases, vector stores

Support & Community

- Large community

#2 — LlamaIndex

Short description: LlamaIndex focuses on connecting LLMs with data sources and building RAG pipelines efficiently.

Key Features

- Data indexing

- RAG pipelines

- Query engines

- Multi-source integration

- Context management

Pros

- Strong data integration

- Easy RAG setup

Cons

- Limited orchestration depth

- Learning curve

Platforms / Deployment

- Cloud / Local

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- Databases, APIs

Support & Community

- Active community

#3 — Haystack

Short description: Haystack is an open-source framework for building search and question-answering systems using LLMs.

Key Features

- RAG pipelines

- Document search

- Question answering

- Pipeline orchestration

- Integration with vector databases

Pros

- Strong search capabilities

- Production-ready

Cons

- Setup complexity

- Resource-heavy

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- Search engines, databases

Support & Community

- Active community

#4 — Semantic Kernel

Short description: Semantic Kernel is a framework designed to integrate LLMs into applications with structured workflows.

Key Features

- Plugin-based architecture

- Workflow orchestration

- LLM integration

- Memory management

- API integration

Pros

- Structured approach

- Enterprise-ready

Cons

- Learning curve

- Limited ecosystem

Platforms / Deployment

- Cloud / Hybrid

Security & Compliance

- Enterprise controls

Integrations & Ecosystem

- Microsoft ecosystem

Support & Community

- Growing community

#5 — AutoGen

Short description: AutoGen enables multi-agent orchestration for collaborative AI workflows.
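As a rough illustration of what multi-agent orchestration means in general, the sketch below passes a task through a sequence of role-based agents, each building on the previous agent's output. This is not AutoGen's API: the `Agent` dataclass, the `run_sequential` function, and the lambda "agents" are hypothetical stand-ins for model-backed agents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Agent:
    name: str
    role: str
    act: Callable[[str], str]  # stands in for a model call made by this agent


def run_sequential(task: str, agents: List[Agent]) -> List[str]:
    """Hand the task to each agent in turn; each sees the previous output."""
    transcript: List[str] = []
    message = task
    for agent in agents:
        message = agent.act(message)
        transcript.append(f"{agent.name} ({agent.role}): {message}")
    return transcript


# Hypothetical agents: plain functions instead of real LLM calls.
researcher = Agent("researcher", "gathers facts", lambda t: f"notes on '{t}'")
writer = Agent("writer", "drafts text", lambda n: f"draft based on {n}")

for line in run_sequential("vector databases", [researcher, writer]):
    print(line)
```

Sequential hand-off is only one coordination pattern; multi-agent frameworks typically also support conversations between agents and dynamic delegation.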

Key Features

- Multi-agent systems

- Workflow automation

- LLM integration

- Task delegation

- Flexible architecture

Pros

- Strong multi-agent support

- Flexible

Cons

- Developer-focused

- Complexity

Platforms / Deployment

- Cloud / Local

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- APIs

Support & Community

- Growing community

#6 — CrewAI

Short description: CrewAI focuses on coordinating teams of AI agents working together on tasks.

Key Features

- Agent orchestration

- Task delegation

- Workflow automation

- Role-based agents

- Lightweight framework

Pros

- Easy collaboration

- Simple setup

Cons

- Limited enterprise features

- Smaller ecosystem

Platforms / Deployment

- Local / Cloud

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- APIs

Support & Community

- Active community

#7 — PromptFlow

Short description: PromptFlow is a workflow tool for building, testing, and deploying LLM applications.

Key Features

- Workflow design

- Prompt management

- Testing tools

- Deployment pipelines

- Monitoring

Pros

- Developer-friendly

- Structured workflows

Cons

- Limited flexibility

- Learning curve

Platforms / Deployment

- Cloud

Security & Compliance

- Enterprise controls

Integrations & Ecosystem

- Azure ecosystem

Support & Community

- Microsoft support

#8 — DSPy

Short description: DSPy is a programming framework for optimizing LLM pipelines through declarative programming.

Key Features

- Declarative programming

- LLM optimization

- Pipeline orchestration

- Model tuning

- Experimentation tools

Pros

- Advanced optimization

- Research-friendly

Cons

- Complex

- Niche use

Platforms / Deployment

- Local / Cloud

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- ML tools

Support & Community

- Academic community

#9 — Flowise

Short description: Flowise is a low-code LLM orchestration tool with visual workflow building.

Key Features

- Drag-and-drop workflows

- LLM integration

- RAG support

- API connections

- Visual interface

Pros

- Easy to use

- No-code friendly

Cons

- Limited scalability

- Fewer advanced features

Platforms / Deployment

- Cloud / Local

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Growing community

#10 — SuperAGI

Short description: SuperAGI is a platform for building autonomous AI agents and orchestration workflows.

Key Features

- Agent orchestration

- Workflow automation

- LLM integration

- Monitoring tools

- API connectivity

Pros

- Full automation

- Modern platform

Cons

- New ecosystem

- Complexity

Platforms / Deployment

- Cloud

Security & Compliance

- Standard controls

Integrations & Ecosystem

- APIs

Support & Community

- Growing community

Comparison Table

| Tool | Best For | Platform | Deployment | Standout Feature | Rating |
|---|---|---|---|---|---|
| LangChain | Developers | Multi | Hybrid | Flexibility | N/A |
| LlamaIndex | RAG | Multi | Hybrid | Data integration | N/A |
| Haystack | Search | Multi | Hybrid | QA pipelines | N/A |
| Semantic Kernel | Enterprise | Multi | Hybrid | Structured workflows | N/A |
| AutoGen | Multi-agent | Multi | Hybrid | Collaboration | N/A |
| CrewAI | Lightweight | Multi | Hybrid | Simplicity | N/A |
| PromptFlow | Workflows | Cloud | Cloud | Prompt pipelines | N/A |
| DSPy | Optimization | Multi | Hybrid | Declarative AI | N/A |
| Flowise | No-code | Multi | Hybrid | Visual builder | N/A |
| SuperAGI | Automation | Cloud | Cloud | Autonomous agents | N/A |

Evaluation & Scoring

| Tool | Core | Ease | Integration | Security | Performance | Support | Value | Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 10 | 7 | 9 | 7 | 9 | 9 | 9 | 8.9 |
| LlamaIndex | 9 | 8 | 8 | 7 | 8 | 8 | 8 | 8.2 |
| Haystack | 9 | 7 | 8 | 7 | 8 | 8 | 8 | 8.1 |
| Semantic Kernel | 8 | 7 | 9 | 9 | 8 | 8 | 7 | 8.1 |
| AutoGen | 9 | 7 | 8 | 7 | 9 | 8 | 8 | 8.3 |
| CrewAI | 8 | 8 | 7 | 7 | 8 | 7 | 8 | 7.8 |
| PromptFlow | 8 | 8 | 8 | 8 | 8 | 8 | 7 | 8.0 |
| DSPy | 9 | 6 | 7 | 7 | 9 | 7 | 8 | 7.9 |
| Flowise | 7 | 9 | 7 | 7 | 7 | 7 | 8 | 7.6 |
| SuperAGI | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.8 |
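The weighting behind the Total column is not stated here. Readers who want to re-rank the tools by their own priorities could compute a weighted average of the per-criterion scores, as in the sketch below. The `WEIGHTS` values are hypothetical, chosen only for illustration, and are not claimed to reproduce the totals in the table; the scores are copied from three of its rows.

```python
from typing import Dict

# Hypothetical weights (assumption): adjust to reflect your own priorities.
WEIGHTS: Dict[str, float] = {
    "core": 0.25, "ease": 0.15, "integration": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}

# Per-criterion scores copied from the table above.
SCORES: Dict[str, Dict[str, int]] = {
    "LangChain": {"core": 10, "ease": 7, "integration": 9, "security": 7,
                  "performance": 9, "support": 9, "value": 9},
    "Semantic Kernel": {"core": 8, "ease": 7, "integration": 9, "security": 9,
                        "performance": 8, "support": 8, "value": 7},
    "Flowise": {"core": 7, "ease": 9, "integration": 7, "security": 7,
                "performance": 7, "support": 7, "value": 8},
}


def weighted_total(scores: Dict[str, int], weights: Dict[str, float]) -> float:
    """Weighted average of the scores, normalized by the sum of the weights."""
    total_weight = sum(weights.values())
    raw = sum(scores[name] * weight for name, weight in weights.items())
    return round(raw / total_weight, 2)


# Rank tools from highest to lowest under the chosen weights.
ranking = sorted(SCORES, key=lambda tool: weighted_total(SCORES[tool], WEIGHTS),
                 reverse=True)
for tool in ranking:
    print(tool, weighted_total(SCORES[tool], WEIGHTS))
```

Raising the weight on security or ease of use, for example, can change the ordering, which is a useful check before committing to a tool.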

Which LLM Orchestration Framework Is Right for You?

Solo / Freelancer

Flowise or CrewAI is best for simplicity and quick setup.

SMB

LangChain or LlamaIndex offers flexibility and scalability.

Mid-Market

PromptFlow or Haystack provides structured workflows.

Enterprise

Semantic Kernel or AutoGen delivers scalability and governance.

Frequently Asked Questions (FAQs)

What is an LLM orchestration framework?

An LLM orchestration framework manages how large language models interact with data, tools, and workflows. It enables developers to build complex AI systems that go beyond simple prompt-response interactions. These frameworks are essential for production-grade AI applications.

Why are orchestration frameworks important?

They allow developers to connect LLMs with APIs, databases, and external tools, enabling multi-step workflows. Without an orchestration layer, teams have to hand-write the glue code for each step, which becomes difficult to scale and maintain as workflows grow.

What is RAG in LLM frameworks?

RAG (Retrieval-Augmented Generation) is a technique in which the application retrieves relevant data from external sources and passes it to the LLM as context before the model generates a response. This can improve accuracy and lets responses draw on up-to-date information. Many orchestration frameworks support RAG pipelines.
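The retrieve-then-generate flow can be sketched in a few lines of plain Python. This is an illustrative toy, not any framework's API: `DOCS`, `retrieve`, and `build_prompt` are hypothetical names, and retrieval here uses simple word overlap where a production pipeline would more likely use embeddings and a vector database. The resulting prompt would then be sent to a model.

```python
import re
from typing import List, Set

# Hypothetical knowledge base (assumption): three short policy snippets.
DOCS: List[str] = [
    "Refunds are processed within 5 business days.",
    "Orders ship from the warehouse every weekday.",
    "Support is available by email around the clock.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_words & tokenize(d)),
                    reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: List[str]) -> str:
    """Place the retrieved passages ahead of the question as context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


question = "How long do refunds take?"
print(build_prompt(question, retrieve(question, DOCS, k=1)))
```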

Do these frameworks require coding?

Most frameworks are developer-focused and require coding knowledge. However, tools like Flowise provide low-code or no-code interfaces, making them accessible to non-developers.

Are LLM orchestration frameworks scalable?

Most of these frameworks are designed with scalability in mind, but how well they handle large workloads varies by tool and depends on how they are deployed. Cloud-based deployment options help them scale across enterprise environments.

Can they integrate with enterprise systems?

Yes, most frameworks support integration with APIs, databases, CRM systems, and cloud platforms. This enables seamless workflow automation and data processing.

Are these frameworks secure?

Security depends on deployment and configuration. Enterprise frameworks provide features like encryption, access control, and governance policies to ensure secure operations.

What industries use these frameworks?

Industries such as finance, healthcare, retail, and technology use LLM orchestration frameworks. They enable automation, analytics, and intelligent applications.

What are the limitations?

Limitations include complexity, dependency on LLM quality, and integration challenges. Proper design and monitoring are required for reliable performance.

How do you choose the right framework?

Choose based on your use case, technical expertise, scalability needs, and integration requirements. Testing frameworks with real workflows helps identify the best fit.

Conclusion

LLM orchestration frameworks are a critical layer in modern AI systems, enabling developers to build scalable, intelligent applications that go beyond simple text generation. Tools like LangChain and LlamaIndex provide flexibility and strong integration capabilities for developers, while frameworks like CrewAI and Flowise simplify orchestration for smaller teams and rapid prototyping. Mid-market users benefit from structured solutions like Haystack and PromptFlow, which offer a balance between usability and performance. Enterprises can use platforms like Semantic Kernel and AutoGen for large-scale, secure, production-ready deployments. Choosing the right framework depends on your application complexity, team expertise, and integration needs. A practical approach is to experiment with a few frameworks, evaluate their performance on real workflows, and select the one that best aligns with your AI architecture and business goals.