Introduction

Experiment tracking tools are essential components of modern machine learning workflows. They help teams log, organize, compare, and reproduce experiments by capturing parameters, metrics, datasets, and model artifacts. In machine learning development, experimentation is iterative and complex, making it critical to track changes and results systematically.

Without proper experiment tracking, teams struggle with reproducibility, collaboration, and model optimization. These tools provide a centralized system to manage experiments, enabling faster development cycles and better decision-making.
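To make "logging an experiment" concrete, here is a minimal sketch of what a tracker records for each run, using only the Python standard library. It is not the API of any tool reviewed here. The function names `log_run` and `load_runs`, the JSON-lines file layout, and the sample values are all assumptions for illustration.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


def log_run(log_path, params, metrics, artifacts=()):
    """Append one experiment run as a JSON line and return the record."""
    record = {
        "run_id": uuid.uuid4().hex,     # unique identifier for this run
        "timestamp": time.time(),       # when the run was logged
        "params": dict(params),         # hyperparameters and settings
        "metrics": dict(metrics),       # results such as accuracy or loss
        "artifacts": list(artifacts),   # names of output files (hypothetical)
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def load_runs(log_path):
    """Read every logged run back from the JSON-lines file."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


if __name__ == "__main__":
    # Made-up values, written to a temporary directory so nothing persists.
    with tempfile.TemporaryDirectory() as tmp:
        log_file = Path(tmp) / "runs.jsonl"
        log_run(log_file, {"learning_rate": 0.01}, {"accuracy": 0.91}, ["model.pkl"])
        log_run(log_file, {"learning_rate": 0.1}, {"accuracy": 0.87})
        for run in load_runs(log_file):
            print(run["run_id"][:8], run["params"], run["metrics"])
```

Dedicated tools add a shared server, a UI, and access control on top of this basic idea, which is where the evaluation criteria in this guide come in.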

Real-world use cases include:

- Tracking hyperparameter tuning experiments

- Comparing model performance across iterations

- Managing datasets and feature versions

- Collaborating across data science teams

- Reproducing experiments for compliance and audits
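The first two use cases, tracking a hyperparameter sweep and comparing results across runs, can be sketched as follows. The "training" step is a toy stand-in function with a known minimum, not a real model. `toy_validation_loss`, `run_sweep`, `best_run`, and the grid values are hypothetical names and numbers chosen for this example.

```python
from itertools import product


def toy_validation_loss(learning_rate, batch_size):
    """Stand-in for real training: a made-up loss that is lowest at (0.01, 64)."""
    return (learning_rate - 0.01) ** 2 * 1e4 + abs(batch_size - 64) / 64


def run_sweep(grid):
    """Evaluate every combination in the grid and record params with the metric."""
    names = sorted(grid)
    runs = []
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        runs.append({"params": params, "val_loss": toy_validation_loss(**params)})
    return runs


def best_run(runs, metric="val_loss"):
    """Return the run with the lowest value of the chosen metric."""
    return min(runs, key=lambda run: run[metric])


# Hypothetical search space for illustration.
grid = {"learning_rate": [0.001, 0.01, 0.1], "batch_size": [32, 64]}
runs = run_sweep(grid)

# Print a simple leaderboard, best run first.
for run in sorted(runs, key=lambda r: r["val_loss"]):
    print(run["params"], round(run["val_loss"], 4))
print("best:", best_run(runs)["params"])
```

A tracking tool stores the same params-plus-metrics records, but persists them and renders the comparison as sortable tables and charts.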

Key evaluation criteria for buyers:

- Experiment logging and metadata tracking

- Visualization of metrics and comparisons

- Integration with ML frameworks and pipelines

- Versioning of models, datasets, and code

- Collaboration and team workflows

- Scalability for large experiments

- Security and governance features

- Deployment flexibility (cloud/on-prem/hybrid)

- Ease of use and developer experience

- Cost and operational overhead

Best for:

Experiment tracking tools are ideal for data scientists, ML engineers, and AI teams working on iterative model development and experimentation.

Not ideal for:

Teams with minimal experimentation or simple analytics workflows may not need dedicated tracking tools.

Key Trends in Experiment Tracking Tools

- Integration with MLOps platforms for end-to-end ML lifecycle

- Real-time experiment logging and visualization

- Collaboration features for distributed teams

- Cloud-native tracking tools with scalable storage

- Automated experiment comparison and analysis

- Support for multiple ML frameworks and languages

- Versioning for datasets, models, and pipelines

- Integration with CI/CD pipelines

- Enhanced visualization dashboards

- Security and compliance for enterprise workflows

How We Selected These Tools (Methodology)

- Evaluated experiment tracking and logging capabilities

- Assessed visualization and comparison features

- Reviewed integration with ML frameworks (TensorFlow, PyTorch, etc.)

- Checked scalability for large-scale experiments

- Considered collaboration and workflow features

- Examined security, governance, and compliance features

- Evaluated ease of use and developer experience

- Reviewed community support and documentation

- Considered open-source vs enterprise solutions

- Ensured applicability across SMB, mid-market, and enterprise use cases

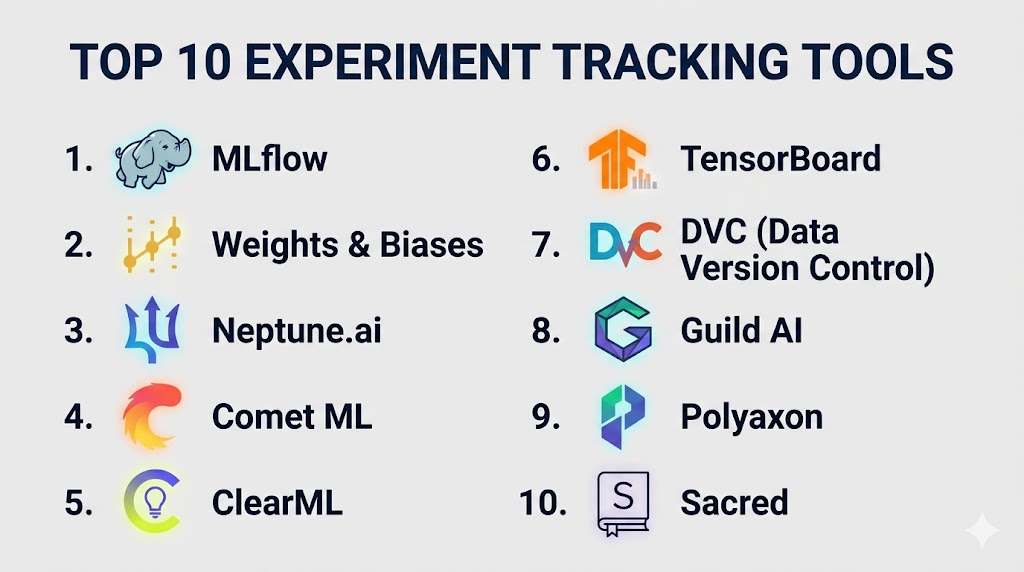

Top 10 Experiment Tracking Tools

#1 — MLflow

Short description: MLflow is an open-source platform for managing the ML lifecycle, including experiment tracking, model packaging, and deployment.

Key Features

- Experiment logging and tracking

- Model registry and versioning

- REST API deployment support

- Integration with multiple ML frameworks

- Parameter and metric tracking

- Artifact storage

Pros

- Open-source and flexible

- Strong ecosystem support

Cons

- Requires setup and infrastructure

- Limited built-in visualization

Platforms / Deployment

- Linux / Windows / macOS

- Cloud / On-prem / Hybrid

Security & Compliance

- Depends on deployment

- Supports authentication and access control

Integrations & Ecosystem

- TensorFlow, PyTorch, Scikit-learn, cloud platforms

Support & Community

- Large open-source community

#2 — Weights & Biases

Short description: Weights & Biases is a popular experiment tracking platform with powerful visualization and collaboration tools.

Key Features

- Real-time experiment tracking

- Hyperparameter tuning visualization

- Model comparison dashboards

- Artifact versioning

- Collaboration tools

Pros

- Excellent UI and visualization

- Easy to use

Cons

- Paid for advanced features

- Cloud-first platform

Platforms / Deployment

- Cloud / On-prem (limited)

Security & Compliance

- Encryption, RBAC

Integrations & Ecosystem

- TensorFlow, PyTorch, Keras, Hugging Face

Support & Community

- Active community

- Enterprise support

#3 — Neptune.ai

Short description: Neptune.ai is an experiment tracking tool focused on metadata logging and model monitoring.

Key Features

- Experiment metadata tracking

- Model monitoring integration

- Visualization dashboards

- Version control for experiments

- API-based logging

Pros

- Flexible logging system

- Strong integrations

Cons

- Requires setup for advanced workflows

- UI less intuitive than competitors

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- RBAC, encryption

Integrations & Ecosystem

- TensorFlow, PyTorch, MLflow

Support & Community

- Active community

#4 — Comet ML

Short description: Comet ML is a cloud-based experiment tracking platform offering model monitoring and collaboration.

Key Features

- Experiment tracking and logging

- Model comparison dashboards

- Hyperparameter optimization

- Collaboration features

- Model registry

Pros

- Strong visualization tools

- Easy integration

Cons

- Paid plans required for team and enterprise use

- Cloud dependency

Platforms / Deployment

- Cloud / Hybrid

Security & Compliance

- Encryption, RBAC

Integrations & Ecosystem

- ML frameworks, cloud services

Support & Community

- Enterprise support

#5 — ClearML

Short description: ClearML is an open-source MLOps platform that includes experiment tracking and pipeline orchestration.

Key Features

- Experiment tracking

- Pipeline automation

- Model versioning

- Dataset management

- Remote execution

Pros

- Open-source and feature-rich

- End-to-end ML workflow support

Cons

- Requires setup

- UI complexity

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- RBAC, encryption

Integrations & Ecosystem

- TensorFlow, PyTorch, pipelines

Support & Community

- Open-source community

#6 — TensorBoard

Short description: TensorBoard is a visualization tool for TensorFlow experiments and metrics tracking.

Key Features

- Visualization of training metrics

- Graph visualization

- Model performance tracking

- Integration with TensorFlow

- Logging support

Pros

- Free and widely used

- Simple visualization

Cons

- Designed primarily for TensorFlow (PyTorch can also write TensorBoard logs via `torch.utils.tensorboard`)

- Not a full tracking platform

Platforms / Deployment

- Local / Cloud

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- TensorFlow ecosystem

Support & Community

- Strong community

#7 — DVC (Data Version Control)

Short description: DVC is a data and experiment versioning tool for managing datasets and ML pipelines.

Key Features

- Data versioning

- Pipeline tracking

- Integration with Git

- Experiment comparison

- Reproducibility support

Pros

- Strong version control

- Works with existing Git workflows

Cons

- Limited visualization

- CLI-heavy

Platforms / Deployment

- Linux / Windows / macOS

- Cloud / On-prem

Security & Compliance

- Depends on Git and storage

Integrations & Ecosystem

- Git, cloud storage, ML pipelines

Support & Community

- Active community

#8 — Guild AI

Short description: Guild AI is an experiment tracking tool focused on reproducibility and experiment management.

Key Features

- Experiment tracking

- Configuration management

- Reproducibility tools

- CLI-based workflows

- Integration with ML frameworks

Pros

- Lightweight

- Easy reproducibility

Cons

- Limited UI

- Smaller community

Platforms / Deployment

- Linux / Windows / macOS

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- Python ML ecosystem

Support & Community

- Community support

#9 — Polyaxon

Short description: Polyaxon is an MLOps platform with experiment tracking, orchestration, and deployment features.

Key Features

- Experiment tracking

- Pipeline orchestration

- Model deployment

- Monitoring tools

- Kubernetes-native

Pros

- End-to-end MLOps

- Scalable

Cons

- Complex setup

- Requires Kubernetes knowledge

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- RBAC, encryption

Integrations & Ecosystem

- Kubernetes, ML frameworks

Support & Community

- Enterprise support

#10 — Sacred

Short description: Sacred is a lightweight experiment tracking tool for managing configurations and results.

Key Features

- Experiment configuration tracking

- Logging and reproducibility

- Lightweight Python integration

- Flexible experiment setup

- Metadata tracking

Pros

- Simple and lightweight

- Easy integration

Cons

- Limited features

- Smaller ecosystem

Platforms / Deployment

- Linux / Windows / macOS

Security & Compliance

- Depends on deployment

Integrations & Ecosystem

- Python ecosystem

Support & Community

- Small community

Comparison Table

| Tool | Best For | Platform | Deployment | Standout Feature | Rating |
|---|---|---|---|---|---|
| MLflow | Open-source ML | Multi | Hybrid | Model lifecycle | N/A |
| Weights & Biases | Visualization | Cloud | Cloud / On-prem (limited) | Dashboards | N/A |
| Neptune.ai | Metadata tracking | Multi | Hybrid | Flexible logging | N/A |
| Comet ML | Collaboration | Cloud | Cloud / Hybrid | Model comparison | N/A |
| ClearML | Full pipeline | Multi | Hybrid | End-to-end ML | N/A |
| TensorBoard | TensorFlow | Multi | Local / Cloud | Visualization | N/A |
| DVC | Version control | Multi | Hybrid | Git integration | N/A |
| Guild AI | Lightweight | Multi | Local | Reproducibility | N/A |
| Polyaxon | MLOps | Multi | Hybrid | Kubernetes-native | N/A |
| Sacred | Simple tracking | Multi | Local | Lightweight setup | N/A |

Evaluation & Scoring

| Tool | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Total |
|---|---|---|---|---|---|---|---|---|
| MLflow | 9 | 7 | 8 | 7 | 8 | 8 | 8 | 8.0 |
| W&B | 9 | 9 | 8 | 8 | 8 | 8 | 7 | 8.4 |
| Neptune | 8 | 8 | 8 | 7 | 8 | 7 | 7 | 7.7 |
| Comet | 8 | 8 | 8 | 7 | 8 | 7 | 7 | 7.7 |
| ClearML | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.9 |
| TensorBoard | 7 | 8 | 6 | 6 | 7 | 7 | 8 | 7.1 |
| DVC | 8 | 6 | 8 | 7 | 7 | 7 | 8 | 7.6 |
| Guild AI | 7 | 7 | 6 | 6 | 7 | 6 | 7 | 6.9 |
| Polyaxon | 8 | 6 | 8 | 8 | 8 | 7 | 7 | 7.6 |
| Sacred | 7 | 7 | 6 | 6 | 7 | 6 | 7 | 6.9 |
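If you want to re-rank the tools by your own priorities, a weighted average over the per-criterion scores is one simple option. The sketch below assumes a hypothetical set of weights. The table above does not state how its Total column was derived, so results from this code need not match it. The names `WEIGHTS` and `weighted_total` are illustrative.

```python
# Hypothetical weights (an assumption, not taken from the table); they sum to 1.0.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integration": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Return the weighted average of per-criterion scores, rounded to 2 places."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return round(sum(scores[name] * weights[name] for name in weights), 2)


# Per-criterion scores copied from the MLflow row of the table above.
mlflow_scores = {
    "core": 9, "ease": 7, "integration": 8, "security": 7,
    "performance": 8, "support": 8, "value": 8,
}
print(weighted_total(mlflow_scores))
```

Raising the weight on the criteria your team cares about most (for example, security for regulated workloads) can change the ranking, which is a useful sanity check before committing to a shortlist.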

Which Experiment Tracking Tool Is Right for You?

Solo / Freelancer

MLflow or Sacred is best for simple tracking and flexibility.

SMB

Weights & Biases or Neptune provides strong visualization and collaboration.

Mid-Market

ClearML or Comet ML supports team workflows and scaling.

Enterprise

Polyaxon or MLflow (backed by dedicated infrastructure) provides full lifecycle tracking and scalability.

Frequently Asked Questions (FAQs)

What is an experiment tracking tool?

A tool that logs parameters, metrics, and outputs of ML experiments.

Why is experiment tracking important?

It ensures reproducibility and helps compare model performance.

Are these tools free?

Some are open-source; others offer paid enterprise plans.

Do they integrate with ML frameworks?

Yes, most support TensorFlow, PyTorch, and others.

Can they track datasets?

Some tools support dataset versioning and tracking.
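One common idea behind dataset versioning is to identify a file by a hash of its contents, so that any change to the data yields a new version identifier. The sketch below illustrates that idea with the standard library only. It is not the implementation of any tool listed here; the choice of SHA-256 and the name `file_fingerprint` are assumptions.

```python
import hashlib
import tempfile
from pathlib import Path


def file_fingerprint(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks to bound memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


if __name__ == "__main__":
    # Made-up CSV content in a temporary directory, for demonstration only.
    with tempfile.TemporaryDirectory() as tmp:
        data = Path(tmp) / "train.csv"
        data.write_text("x,y\n1,2\n", encoding="utf-8")
        before = file_fingerprint(data)
        data.write_text("x,y\n1,2\n3,4\n", encoding="utf-8")
        after = file_fingerprint(data)
        print("changed:", before != after)
```

Storing the fingerprint alongside a run's parameters and metrics records which exact data produced a given result.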

Are they scalable?

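Generally yes. Managed cloud platforms scale with the least effort, while self-hosted tools scale with the infrastructure you provision for them.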

Can teams collaborate?

Yes, most tools support team collaboration.

Do they support visualization?

Yes, many tools provide dashboards and graphs.

Are they secure?

Enterprise-oriented tools typically offer RBAC and encryption. For self-hosted open-source tools, security depends on how they are deployed.

How to choose one?

Choose based on the scale of your experiments, budget, required integrations, and team needs.

Conclusion

Experiment tracking tools are a foundational component of modern machine learning workflows, enabling teams to systematically manage, compare, and reproduce experiments. Open-source solutions like MLflow and ClearML provide flexibility and control, making them ideal for teams with strong engineering capabilities. Platforms such as Weights & Biases, Comet ML, and Neptune.ai offer powerful visualization and collaboration features, making them suitable for growing teams and organizations focused on productivity. For enterprises, tools like Polyaxon deliver scalable, production-ready capabilities integrated with broader MLOps ecosystems. Choosing the right tool depends on your workflow complexity, team size, infrastructure preferences, and budget. A practical approach is to test a few tools with real experiments, evaluate usability and integration, and then standardize across teams for consistency and efficiency in ML development.