Introduction

Model monitoring and drift detection tools are critical components of modern machine learning operations (MLOps). These tools continuously track model performance, data quality, and prediction behavior in production environments to ensure models remain accurate, reliable, and relevant over time.

Machine learning models are highly sensitive to changes in input data and real-world conditions. When these changes occur, models can drift, leading to degraded performance and incorrect predictions. Monitoring tools help detect these issues early and trigger retraining or corrective actions.

Real-world use cases include:

- Detecting fraud model degradation in financial systems

- Monitoring recommendation engines for accuracy decline

- Tracking data quality issues in ETL pipelines

- Identifying bias and fairness issues in AI models

- Automating retraining triggers for production ML systems

Key evaluation criteria for buyers:

- Data drift and concept drift detection capabilities

- Real-time monitoring and alerting

- Model performance tracking (accuracy, latency, bias)

- Integration with ML pipelines and MLOps tools

- Visualization and reporting dashboards

- Scalability for high-volume data streams

- Explainability and root-cause analysis

- Security and compliance features

- Deployment flexibility (cloud/on-prem/hybrid)

- Ease of setup and operational overhead

Best for:

These tools are ideal for ML engineers, data scientists, and DevOps teams managing production ML systems.

Not ideal for:

Teams working only on experimentation or offline models without deployment may not need full monitoring platforms.

Key Trends in Model Monitoring & Drift Detection Tools

- AI observability platforms combining monitoring, logging, and debugging

- Real-time drift detection for streaming data pipelines

- Automated retraining triggers integrated with MLOps workflows

- Explainable AI (XAI) for root-cause analysis

- Cloud-native monitoring solutions for scalability

- Integration with feature stores and data pipelines

- Support for LLM monitoring and AI agents

- Advanced anomaly detection using ML models

- Data quality + model quality unified monitoring

- Governance and compliance features for regulated industries

How We Selected These Tools (Methodology)

- Evaluated drift detection accuracy and capabilities (data, feature, concept drift)

- Assessed real-time monitoring and alerting systems

- Reviewed integration with ML frameworks and pipelines

- Checked visualization and debugging tools

- Considered scalability and performance in production

- Examined security, governance, and compliance features

- Evaluated ease of use and onboarding experience

- Reviewed community support and documentation

- Considered open-source vs enterprise solutions

- Ensured relevance across SMB, mid-market, and enterprise use cases

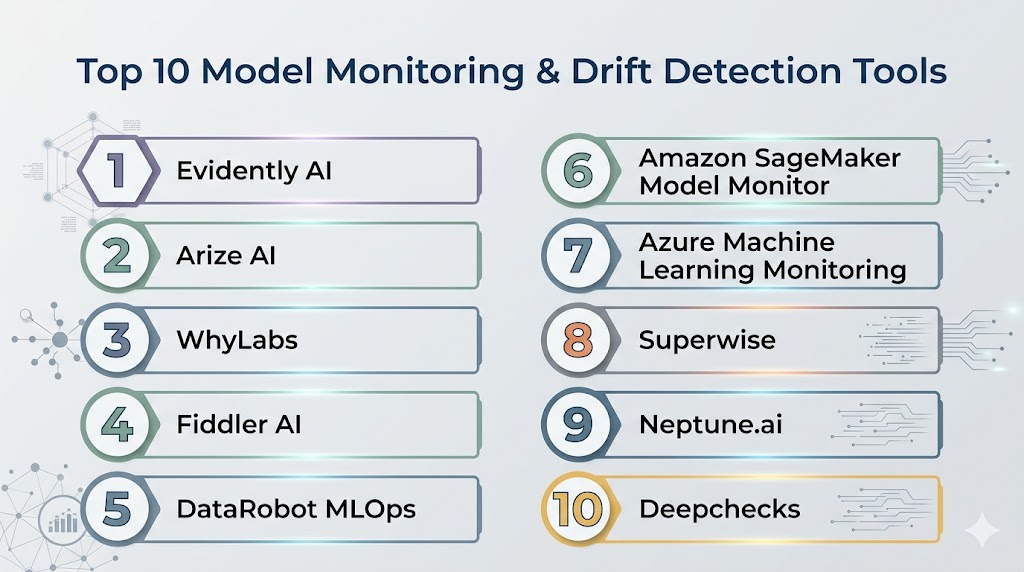

Top 10 Model Monitoring & Drift Detection Tools

#1 — Evidently AI

Short description: Evidently AI is an open-source tool designed for monitoring ML models and detecting data drift with rich visual reports. It is ideal for teams looking for transparency and flexibility in monitoring.

Key Features

- Data drift detection with 20+ statistical methods

- Interactive dashboards and reports

- Model performance tracking

- Integration with Python pipelines

- Open-source and extensible

Pros

- Transparent and customizable

- Strong visualization capabilities

Cons

- Requires engineering setup

- Limited enterprise automation

Platforms / Deployment

- Linux / Windows / macOS

- Cloud / On-prem / Hybrid

Security & Compliance

- Depends on deployment

- Supports standard access control

Integrations & Ecosystem

- Python ecosystem, ML pipelines, monitoring tools

Support & Community

- Active open-source community

- Good documentation

#2 — WhyLabs

Short description: WhyLabs is a cloud-based AI observability platform focused on monitoring data quality and model performance at scale.

Key Features

- Real-time drift detection

- Data quality monitoring

- Model performance tracking

- Alerting and anomaly detection

- Scalable cloud monitoring

Pros

- Strong observability features

- Scales well for large datasets

Cons

- Cloud-first approach

- Paid enterprise model

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC

- Enterprise compliance support

Integrations & Ecosystem

- ML pipelines, cloud platforms, data warehouses

Support & Community

- Enterprise support

- Growing community

#3 — Arize AI

Short description: Arize AI is an ML observability platform providing deep insights into model performance and root-cause analysis.

Key Features

- Drift detection and monitoring

- Root-cause analysis tools

- Real-time performance tracking

- Explainability dashboards

- Integration with ML workflows

Pros

- Strong debugging capabilities

- Enterprise-grade monitoring

Cons

- Paid solution

- Requires integration effort

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC

- SOC 2, GDPR

Integrations & Ecosystem

- ML frameworks, data pipelines, cloud services

Support & Community

- Enterprise support

- Active ecosystem

#4 — Fiddler AI

Short description: Fiddler AI provides explainable AI and monitoring tools for regulated industries and enterprise AI systems.

Key Features

- Drift detection and monitoring

- Explainable AI (XAI)

- Bias and fairness monitoring

- Real-time dashboards

- Model debugging tools

Pros

- Strong compliance and explainability

- Suitable for regulated industries

Cons

- Enterprise-focused pricing

- Complex setup

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- RBAC, encryption

- SOC 2, GDPR

Integrations & Ecosystem

- ML frameworks, cloud platforms, BI tools

Support & Community

- Enterprise support

#5 — Monte Carlo (Data Observability)

Short description: Monte Carlo focuses on data reliability and observability, ensuring data pipelines feeding ML models remain accurate.

Key Features

- Data quality monitoring

- Pipeline observability

- Anomaly detection

- Root-cause analysis

- Integration with data warehouses

Pros

- Strong data monitoring

- Easy integration with pipelines

Cons

- Fewer ML-specific features

- Enterprise pricing

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC

- Compliance support

Integrations & Ecosystem

- Snowflake, BigQuery, data pipelines

Support & Community

- Enterprise support

#6 — DataRobot MLOps

Short description: DataRobot MLOps offers end-to-end monitoring, governance, and lifecycle management for ML models.

Key Features

- Automated drift detection

- Model performance monitoring

- Governance and audit logs

- CI/CD pipeline integration

- Bias and fairness tracking

Pros

- Comprehensive enterprise solution

- Strong automation

Cons

- High cost

- Vendor lock-in

Platforms / Deployment

- Cloud / On-prem / Hybrid

Security & Compliance

- SOC 2, GDPR, encryption

Integrations & Ecosystem

- ML pipelines, cloud platforms

Support & Community

- Enterprise support

#7 — Amazon SageMaker Model Monitor

Short description: SageMaker Model Monitor is a managed service for tracking model quality and detecting drift in AWS environments.

Key Features

- Data and concept drift detection

- Automated baselines

- Monitoring dashboards

- Alerting and logging

- Integration with AWS pipelines

Pros

- Fully managed

- Scales easily

Cons

- AWS-only

- Pricing based on usage

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption

- SOC 2, GDPR

Integrations & Ecosystem

- AWS ecosystem, ML pipelines

Support & Community

- AWS support

#8 — Azure ML Monitoring

Short description: Azure ML provides monitoring tools integrated into its ML platform for drift detection and performance tracking.

Key Features

- Drift detection tools

- Model monitoring dashboards

- CI/CD integration

- Alerting and reporting

- Data quality checks

Pros

- Strong enterprise integration

- Good automation features

Cons

- Azure-only

- Learning curve

Platforms / Deployment

- Cloud

Security & Compliance

- RBAC, encryption

- SOC 2, GDPR

Integrations & Ecosystem

- Azure ecosystem

Support & Community

- Microsoft support

#9 — Superwise

Short description: Superwise is an AI monitoring platform focused on automated alerts, drift detection, and model performance insights.

Key Features

- Real-time drift detection

- Automated alerts

- Model performance tracking

- Root-cause analysis

- Dashboard visualization

Pros

- Easy-to-use dashboards

- Strong automation

Cons

- Paid platform

- Limited open-source ecosystem

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC

Integrations & Ecosystem

- ML pipelines, cloud tools

Support & Community

- Enterprise support

#10 — Neptune.ai

Short description: Neptune.ai is an experiment tracking and monitoring tool for ML workflows with drift analysis capabilities.

Key Features

- Experiment tracking

- Metadata logging

- Model monitoring

- Integration with ML frameworks

- Visualization dashboards

Pros

- Strong experiment tracking

- Flexible integrations

Cons

- Not fully dedicated to drift detection

- Requires setup

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- RBAC, encryption

Integrations & Ecosystem

- TensorFlow, PyTorch, MLflow

Support & Community

- Active community

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Evidently AI | Open-source monitoring | Linux / Windows / macOS | Cloud / On-prem / Hybrid | Drift visualization | N/A |
| WhyLabs | AI observability | Cloud | Cloud | Data quality monitoring | N/A |
| Arize AI | Enterprise monitoring | Cloud | Cloud | Root-cause analysis | N/A |
| Fiddler AI | Explainable AI | Cloud / Hybrid | Cloud / On-prem / Hybrid | Bias & fairness monitoring | N/A |
| Monte Carlo | Data observability | Cloud | Cloud | Pipeline monitoring | N/A |
| DataRobot MLOps | Enterprise ML | Cloud / Hybrid | Cloud / On-prem / Hybrid | Governance & automation | N/A |
| SageMaker Model Monitor | AWS ML | Cloud | Cloud | Managed monitoring | N/A |
| Azure ML Monitoring | Azure ML | Cloud | Cloud | Drift detection pipelines | N/A |
| Superwise | Automated monitoring | Cloud | Cloud | Real-time alerts | N/A |
| Neptune.ai | Experiment tracking | Cloud / On-prem | Cloud / On-prem | Metadata tracking | N/A |

Evaluation & Scoring of Model Monitoring Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Evidently | 8 | 7 | 7 | 7 | 8 | 7 | 8 | 7.50 |
| WhyLabs | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Arize AI | 9 | 8 | 8 | 8 | 9 | 8 | 7 | 8.20 |
| Fiddler | 8 | 7 | 8 | 9 | 8 | 8 | 7 | 7.80 |
| Monte Carlo | 7 | 8 | 7 | 8 | 7 | 7 | 7 | 7.25 |
| DataRobot | 9 | 8 | 8 | 9 | 9 | 8 | 7 | 8.30 |
| SageMaker | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Azure ML | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Superwise | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| Neptune | 7 | 7 | 8 | 7 | 7 | 7 | 7 | 7.15 |
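To make the weighting explicit, here is a minimal Python sketch of how a weighted total can be derived from the per-criterion scores. The weights come from the column headers of the scoring table; the function name `weighted_total` and the dictionary keys are illustrative choices, not part of any tool.

```python
# Weights (in percent) copied from the column headers of the scoring table.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Return the weighted average of 1-10 criterion scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    # Sum integer products first, then divide once, to avoid float drift.
    return sum(scores[name] * WEIGHTS[name] for name in WEIGHTS) / 100


# Scores from the WhyLabs row of the scoring table.
whylabs = {
    "core": 8, "ease": 8, "integrations": 8, "security": 8,
    "performance": 8, "support": 7, "value": 7,
}
whylabs_total = weighted_total(whylabs)
print(whylabs_total)  # 7.75
```

Swapping in your own weights is a quick way to re-rank the tools against priorities that differ from the ones assumed here, for example a heavier weight on security for regulated workloads.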

Which Model Monitoring Tool Is Right for You?

Solo / Freelancer

Evidently AI or Neptune.ai is best for lightweight monitoring.

SMB

WhyLabs or Superwise provides easy cloud-based monitoring.

Mid-Market

Arize AI or Fiddler AI offers deeper observability and explainability.

Enterprise

DataRobot MLOps, Azure ML, or SageMaker provides full-scale monitoring and governance.

Frequently Asked Questions (FAQs)

What is model drift?

Model drift occurs when model performance degrades because the input data, or the relationship between the inputs and the target, has changed since training.
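One simple way to observe this kind of degradation is to compare accuracy over a recent window of labeled predictions against the accuracy measured at deployment time. The sketch below assumes ground-truth labels eventually arrive; the class name `RollingAccuracy`, the window size, and the 0.05 tolerance are hypothetical choices for illustration, not values taken from any of the tools listed.

```python
from collections import deque


class RollingAccuracy:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window: int) -> None:
        # deque with maxlen silently drops the oldest entry when full.
        self._hits = deque(maxlen=window)

    def update(self, predicted, actual) -> None:
        self._hits.append(1 if predicted == actual else 0)

    @property
    def value(self) -> float:
        if not self._hits:
            raise ValueError("no labeled predictions recorded yet")
        return sum(self._hits) / len(self._hits)


def has_degraded(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """True when accuracy fell more than `tolerance` below the baseline."""
    return (baseline - current) > tolerance


monitor = RollingAccuracy(window=4)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1), (1, 0), (1, 1)]:
    monitor.update(predicted, actual)

# Only the last four pairs count: one hit out of four.
print(monitor.value)                       # 0.25
print(has_degraded(0.90, monitor.value))   # True
```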

Why is model monitoring important?

It helps ensure that models remain accurate and reliable in production environments.

What types of drift exist?

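The main types are data drift (the input distribution changes), feature drift (individual features change), and concept drift (the relationship between inputs and the target changes).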
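Data drift on a single numeric feature is often quantified with a distance between a reference sample and a current sample. The sketch below uses the Population Stability Index (PSI) with equal-width bins, written in plain Python; it is one common metric among many and is not presented as the method any specific tool uses. The function name `psi`, the bin count, and the `eps` smoothing value are assumptions made for this example.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(reference), max(reference)
    if lo == hi:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            # Clamp values outside the reference range into the edge bins.
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(sample), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


reference = [i / 100 for i in range(100)]        # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]    # squeezed into [0.5, 1)

print(psi(reference, reference))   # 0.0
print(psi(reference, shifted) > 0.25)
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions and should be validated against your own data.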

Can drift detection be automated?

Yes, many modern tools can trigger alerts and retraining pipelines automatically.
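Conceptually, this automation comes down to mapping monitoring signals to actions through thresholds. The following sketch shows that decision step in isolation; the `Thresholds` defaults, the `Action` names, and the `decide` function are hypothetical and would need tuning per model. In a real setup, the returned action would be passed to your alerting system or pipeline orchestrator.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    ALERT = "alert"
    RETRAIN = "retrain"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical defaults; tune them per model and per feature.
    drift_alert: float = 0.10
    drift_retrain: float = 0.25
    max_accuracy_drop: float = 0.05


def decide(drift_score: float, accuracy_drop: float,
           t: Thresholds = Thresholds()) -> Action:
    """Map monitoring signals to the strongest action they justify."""
    if drift_score >= t.drift_retrain or accuracy_drop > t.max_accuracy_drop:
        return Action.RETRAIN
    if drift_score >= t.drift_alert:
        return Action.ALERT
    return Action.NONE


print(decide(drift_score=0.02, accuracy_drop=0.00))  # Action.NONE
print(decide(drift_score=0.15, accuracy_drop=0.01))  # Action.ALERT
print(decide(drift_score=0.30, accuracy_drop=0.00))  # Action.RETRAIN
```

Keeping the decision logic separate from the metric computation makes it easier to test thresholds offline before wiring them to a retraining job.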

Are these tools real-time?

Many support real-time or near real-time monitoring.

Do they integrate with MLOps?

Yes, most integrate with CI/CD and ML pipelines.

Are open-source tools available?

Yes. Evidently AI is open source, and MLflow-based setups are another option.

Can they detect bias?

Yes, tools like Fiddler AI provide fairness checks.
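As an illustration of what a basic fairness check measures, the sketch below computes the demographic parity gap: the difference in positive-prediction rate between groups. This is one of several fairness metrics and is not a description of how Fiddler AI or any other product implements its checks. The function names and the toy data are assumptions for the example.

```python
from collections import defaultdict


def positive_rates(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rates(predictions, groups).values()
    return max(rates) - min(rates)


# Toy data: binary predictions and a group label for each prediction.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap = demographic_parity_gap(preds, groups)
print(positive_rates(preds, groups))  # {'a': 0.75, 'b': 0.25}
print(gap)                            # 0.5
```

A gap near zero means the groups receive positive predictions at similar rates; what counts as an acceptable gap depends on the use case and any applicable regulation.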

Do they support cloud deployment?

Most modern tools are cloud-native or hybrid.

How do I choose the right tool?

Choose based on scale, cloud preference, monitoring depth, and budget.

Conclusion

Model monitoring and drift detection tools are essential for maintaining reliable, accurate, and trustworthy machine learning systems in production. As data evolves, models inevitably degrade, making proactive monitoring critical to avoid performance loss and incorrect predictions. Open-source tools like Evidently AI offer flexibility and transparency, while platforms like Arize AI and Fiddler AI provide advanced observability and explainability. Cloud-native solutions such as SageMaker and Azure ML simplify deployment and scaling for growing teams. Enterprises benefit from comprehensive platforms like DataRobot MLOps that combine governance, automation, and monitoring. Ultimately, the right tool depends on your organization’s scale, technical expertise, and infrastructure. Start by shortlisting a few tools, testing them with real production data, and validating integration with your ML pipelines to ensure long-term success and reliability.