Introduction

AI inference serving platforms, also known as model serving platforms, are systems used to deploy, manage, optimize, and scale machine learning or generative AI models in production environments. These platforms help organizations transform trained AI models into real-time applications capable of handling predictions, conversational AI, recommendation engines, computer vision workloads, and large-scale generative AI tasks.

The category has become increasingly important as businesses move from AI experimentation into full production deployment. Modern enterprises require low-latency inference, GPU optimization, autoscaling, observability, multi-model orchestration, and enterprise-grade security controls to support growing AI workloads. The rapid growth of generative AI, multimodal applications, retrieval-augmented generation workflows, and edge AI deployments has accelerated demand for reliable model serving infrastructure.

Real-world use cases include:

- AI chatbots and virtual assistants

- Real-time recommendation engines

- Fraud detection systems

- AI-powered code generation

- Computer vision and video analytics

- Speech recognition applications

- Enterprise AI search platforms

Key buyer evaluation criteria include:

- Scalability and autoscaling

- GPU optimization capabilities

- Framework compatibility

- Latency and throughput performance

- Security and governance controls

- Monitoring and observability

- API flexibility

- Deployment flexibility

- Cost efficiency

- Ease of deployment and operations

Best for: AI engineers, MLOps teams, platform engineering teams, AI startups, SaaS companies, enterprise AI teams, fintech organizations, healthcare AI teams, and businesses deploying production AI systems at scale.

Not ideal for: Small organizations running lightweight AI workloads, teams still experimenting with AI prototypes, or businesses that only require hosted AI APIs without infrastructure management.

Key Trends in AI Inference Serving Platforms

- GPU optimization is becoming essential for reducing inference costs in large language model deployments.

- Serverless inference platforms are growing in popularity for burst workloads and flexible scaling.

- Hybrid and multi-cloud AI deployments are increasingly common for resilience and vendor flexibility.

- Quantization and model compression are helping reduce infrastructure costs while maintaining performance.

- Edge AI inference is expanding in manufacturing, healthcare, automotive, and IoT industries.

- Observability tools for AI inference are becoming standard for latency monitoring and model reliability.

- Kubernetes-native model serving continues to dominate enterprise AI infrastructure.

- AI gateways and intelligent routing layers are emerging for multi-model orchestration.

- Security and governance requirements are becoming stricter for regulated industries.

- Specialized AI accelerators beyond traditional GPUs are shaping future inference strategies.
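Here is a minimal sketch of the quantization trend: symmetric per-tensor int8 quantization, which is one common scheme among several. The function names are hypothetical. Production toolchains such as TensorRT or ONNX Runtime add calibration data, per-channel scales, and other refinements that this example leaves out.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(x / scale)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude onto 127; fall back to scale 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values: x_hat = q * scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 1.27, -1.0]  # hypothetical weight values
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    max_error = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, scale, max_error)
```

Each int8 value takes a quarter of the memory of a 32-bit float, and most of the cost savings come from that reduction. The trade-off is a rounding error of at most half a scale step per value.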

How We Selected These Tools (Methodology)

The platforms in this list were selected using multiple practical and technical evaluation factors:

- Strong enterprise or developer adoption

- Proven production inference capabilities

- Broad framework compatibility

- Scalability and performance efficiency

- Security and governance readiness

- Integration ecosystem maturity

- Flexibility across cloud and self-hosted deployments

- Monitoring and operational tooling quality

- Community adoption and ecosystem momentum

- Suitability across enterprise, SMB, and developer-focused use cases

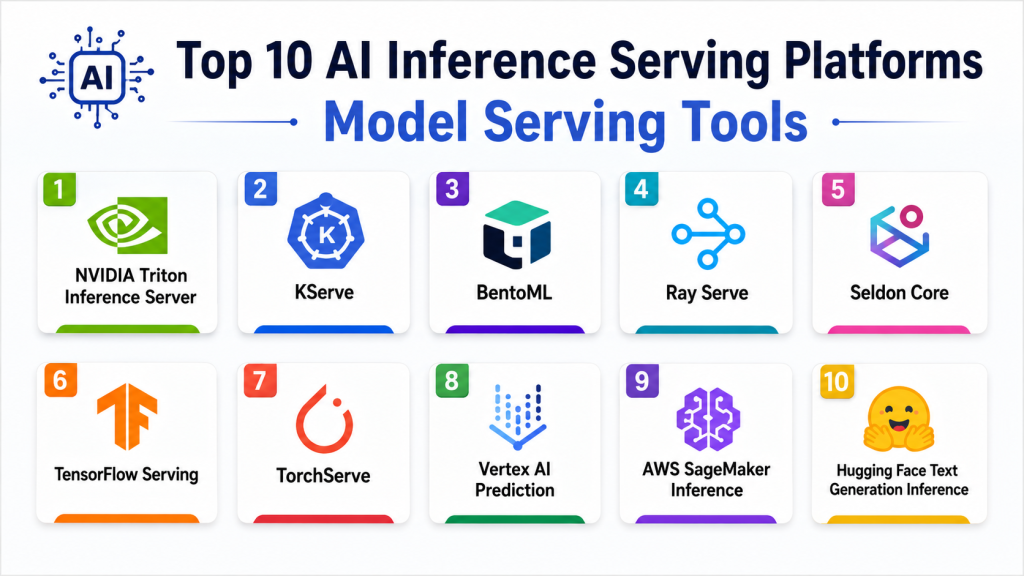

Top 10 AI Inference Serving Platforms (Model Serving Tools)

1- NVIDIA Triton Inference Server

Short description: NVIDIA Triton Inference Server is a high-performance inference serving platform designed for GPU-accelerated AI workloads. It supports multiple frameworks and enables scalable deployment of machine learning and generative AI models across cloud, edge, and enterprise environments. It is widely used by organizations optimizing large-scale AI infrastructure.

Key Features

- Multi-framework inference support

- Dynamic batching

- GPU acceleration optimization

- TensorRT integration

- Kubernetes deployment support

- Model repository management

- Performance monitoring tools

Pros

- Excellent GPU utilization

- Strong enterprise adoption

- High-performance inference

- Broad framework compatibility

Cons

- Can be complex for beginners

- Requires GPU infrastructure expertise

- Advanced tuning may take time

- Less optimized for CPU-only deployments

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC support, encryption compatibility, audit logging integration. Additional certifications not publicly stated.

Integrations & Ecosystem

NVIDIA Triton integrates deeply with enterprise AI infrastructure and GPU-centric deployment environments.

- Kubernetes

- TensorRT

- PyTorch

- TensorFlow

- ONNX Runtime

- Prometheus

- NVIDIA AI Enterprise

Support & Community

Strong enterprise support ecosystem with extensive documentation and active developer adoption.
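Dynamic batching is one of Triton's key features. It collects individual requests that arrive close together into a single batch, so the GPU processes them in one pass. The sketch below models only the scheduling rule: flush the batch when it is full, or when the oldest request has waited long enough. The class and parameter names are hypothetical. They loosely mirror Triton's `max_batch_size` and `max_queue_delay_microseconds` model-configuration settings, but this is not Triton's implementation.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class DynamicBatcher:
    """Hypothetical batcher: flush when the batch is full or the oldest request is too old."""

    max_batch_size: int
    max_queue_delay_ms: float
    _queue: List[Tuple[float, str]] = field(default_factory=list, init=False)

    def submit(self, now_ms: float, request_id: str) -> List[List[str]]:
        """Enqueue one request and return any batches that are now ready to run."""
        self._queue.append((now_ms, request_id))
        return self.poll(now_ms)

    def poll(self, now_ms: float) -> List[List[str]]:
        """Release full batches first, then a partial batch whose oldest request timed out."""
        ready: List[List[str]] = []
        while len(self._queue) >= self.max_batch_size:
            ready.append(self._take(self.max_batch_size))
        if self._queue and now_ms - self._queue[0][0] >= self.max_queue_delay_ms:
            ready.append(self._take(len(self._queue)))
        return ready

    def _take(self, n: int) -> List[str]:
        """Pop the n oldest requests off the queue, preserving arrival order."""
        batch = [request_id for _, request_id in self._queue[:n]]
        del self._queue[:n]
        return batch


if __name__ == "__main__":
    batcher = DynamicBatcher(max_batch_size=4, max_queue_delay_ms=5.0)
    for t, rid in [(0.0, "a"), (1.0, "b"), (2.0, "c"), (3.0, "d"), (4.0, "e")]:
        for batch in batcher.submit(t, rid):
            print(f"t={t}ms run batch {batch}")
    for batch in batcher.poll(9.0):
        print(f"t=9.0ms run batch {batch}")
```

In a real server, `poll` would also run on a timer, so a lone request is not stranded until the next one arrives. The delay bound is the knob that trades a little latency for larger, more GPU-efficient batches.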

2- KServe

Short description: KServe is a Kubernetes-native inference serving platform designed for scalable machine learning deployments. It enables serverless inference, autoscaling, and production AI serving for organizations standardizing AI operations on Kubernetes infrastructure.

Key Features

- Kubernetes-native serving

- Serverless inference

- Autoscaling support

- Multi-framework compatibility

- Canary deployment support

- Explainability capabilities

- GPU scheduling

Pros

- Strong cloud-native architecture

- Flexible deployment patterns

- Large open-source ecosystem

- Good scalability for enterprise AI

Cons

- Requires Kubernetes expertise

- Operational complexity for smaller teams

- Limited built-in UI experience

- Initial setup can be difficult

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Kubernetes RBAC integration, authentication support, encryption compatibility. Additional compliance varies by deployment.

Integrations & Ecosystem

KServe works well within cloud-native AI infrastructure and MLOps pipelines.

- Kubeflow

- Istio

- Knative

- Prometheus

- MLflow

- TensorFlow Serving

- Seldon Core

Support & Community

Large open-source community with growing enterprise adoption and strong Kubernetes ecosystem support.
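KServe's serverless mode relies on Knative. Knative's default autoscaler sizes a service by comparing in-flight requests against a per-replica concurrency target, and it can scale to zero when traffic stops. The function below is a simplified, hypothetical version of that rule. It ignores Knative's averaging windows, target-utilization factor, and scale-to-zero grace period. Its `min_replicas` and `max_replicas` bounds play the role of the `minReplicas` and `maxReplicas` settings on a KServe predictor.

```python
import math


def desired_replicas(
    in_flight_requests: float,
    target_concurrency: float,
    min_replicas: int = 0,
    max_replicas: int = 10,
) -> int:
    """Concurrency-based sizing: ceil(load / per-replica target), clamped to bounds.

    With min_replicas=0 an idle service scales to zero (the "serverless" case).
    """
    if target_concurrency <= 0:
        raise ValueError("target_concurrency must be positive")
    wanted = math.ceil(in_flight_requests / target_concurrency)
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    for load in [0, 3, 10, 45, 500]:  # hypothetical in-flight request counts
        print(load, desired_replicas(load, target_concurrency=10))
```

Setting `min_replicas` to 1 or more trades idle cost for avoiding cold starts, which matters for large models that take a long time to load.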

3- BentoML

Short description: BentoML is a developer-focused AI serving platform that simplifies model deployment and production inference. It allows teams to package, deploy, and scale machine learning and generative AI applications using API-first workflows and production-ready infrastructure.

Key Features

- API-first model serving

- LLM deployment support

- Containerized packaging

- Multi-framework support

- Autoscaling capabilities

- GPU optimization

- CI/CD integration support

Pros

- Developer-friendly workflows

- Fast deployment process

- Strong generative AI support

- Flexible deployment options

Cons

- Smaller enterprise ecosystem

- Governance features still evolving

- Limited advanced operational tooling

- Smaller community compared to larger projects

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication support, API security controls, container security compatibility. Additional certifications not publicly stated.

Integrations & Ecosystem

BentoML integrates with modern AI application development stacks and deployment pipelines.

- Docker

- Kubernetes

- Hugging Face

- MLflow

- LangChain

- PyTorch

- OpenAI-compatible APIs

Support & Community

Growing developer community with strong documentation and increasing enterprise interest.

4- Ray Serve

Short description: Ray Serve is a scalable inference serving framework built on the Ray distributed computing ecosystem. It is designed for distributed AI inference workloads, large-scale machine learning systems, and advanced generative AI applications.

Key Features

- Distributed inference serving

- Python-native architecture

- LLM deployment support

- Autoscaling and load balancing

- DAG-based orchestration

- Streaming inference

- Multi-model serving

Pros

- Excellent distributed scalability

- Strong orchestration flexibility

- Good fit for advanced AI systems

- Efficient resource utilization

Cons

- Requires engineering expertise

- Operational complexity can increase quickly

- Smaller enterprise governance layer

- Learning curve for infrastructure teams

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication support and infrastructure-level security compatibility. Additional compliance depends on deployment architecture.

Integrations & Ecosystem

Ray Serve integrates with distributed AI workflows and Python-centric AI ecosystems.

- Ray

- Kubernetes

- PyTorch

- TensorFlow

- Hugging Face

- FastAPI

- Anyscale

Support & Community

Strong open-source momentum with growing adoption among AI infrastructure teams.
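Ray Serve balances load by routing each request to one of a deployment's replicas. A well-known policy for this is power-of-two-choices: sample two replicas at random and send the request to the one with fewer requests in flight. The sketch below illustrates the idea in plain Python. `pick_replica` and the replica names are hypothetical, and the sketch is not presented as Ray Serve's internal router.

```python
import random
from typing import Dict


def pick_replica(in_flight: Dict[str, int], rng: random.Random) -> str:
    """Power-of-two-choices: sample two replicas, route to the less loaded one."""
    if not in_flight:
        raise ValueError("no replicas available")
    if len(in_flight) == 1:
        return next(iter(in_flight))
    first, second = rng.sample(sorted(in_flight), 2)
    return first if in_flight[first] <= in_flight[second] else second


if __name__ == "__main__":
    rng = random.Random(0)
    load = {"replica-1": 0, "replica-2": 0, "replica-3": 0}
    for _ in range(30):
        chosen = pick_replica(load, rng)
        load[chosen] += 1  # pretend each request stays in flight
    print(load)
```

Comparing only two random replicas keeps routing cheap. It still steers most traffic away from the busiest replica, so the busiest replica is chosen only when the other sampled replica is equally loaded.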

5- Seldon Core

Short description: Seldon Core is an open-source inference serving and MLOps platform designed for Kubernetes-based AI deployments. It provides scalable model deployment, monitoring, orchestration, and operational management capabilities for enterprise AI environments.

Key Features

- Kubernetes-native deployment

- Model monitoring

- Canary deployment support

- Explainability features

- Multi-framework serving

- Inference graph orchestration

- Drift monitoring

Pros

- Strong enterprise governance features

- Mature Kubernetes integration

- Flexible deployment patterns

- Good observability support

Cons

- Requires Kubernetes expertise

- Operational overhead for smaller teams

- Technical learning curve

- UI experience can feel complex

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC support, audit capabilities, Kubernetes security integration. Additional certifications vary by deployment.

Integrations & Ecosystem

Seldon Core integrates with enterprise MLOps and Kubernetes-based AI infrastructure.

- Kubeflow

- Prometheus

- Grafana

- MLflow

- Istio

- Kafka

- TensorFlow

Support & Community

Active open-source ecosystem with commercial enterprise support availability.
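Drift monitoring compares the distribution of live inputs with the data a model was trained on. Seldon's drift detection is typically built on dedicated detectors, for example those in its Alibi Detect library. The sketch below instead uses the population stability index (PSI), a simple and widely used drift score, to show the idea. The function names and bin edges are assumptions for illustration.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values per bin [edges[i], edges[i+1]); the last bin is closed.

    Values outside [edges[0], edges[-1]] are ignored in this sketch.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                counts[i] += 1
                break
    total = max(sum(counts), 1)
    return [c / total for c in counts]


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float],
    edges: Sequence[float], eps: float = 1e-6,
) -> float:
    """PSI = sum((a - e) * ln(a / e)) over bins; eps avoids log(0) for empty bins."""
    e_frac = bin_fractions(expected, edges)
    a_frac = bin_fractions(actual, edges)
    return sum((a - e) * math.log((a + eps) / (e + eps)) for e, a in zip(e_frac, a_frac))


if __name__ == "__main__":
    edges = [0, 1, 2, 3, 4]
    training = [0.5] * 25 + [1.5] * 25 + [2.5] * 25 + [3.5] * 25  # hypothetical feature values
    live = [2.5] * 50 + [3.5] * 50  # live traffic shifted toward higher values
    print(population_stability_index(training, live, edges))
```

A common rule of thumb treats a PSI below 0.1 as stable and one above 0.25 as significant drift. Thresholds should still be tuned per feature.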

6- TensorFlow Serving

Short description: TensorFlow Serving is a production-grade serving system optimized for TensorFlow models. It enables scalable deployment and efficient inference serving for machine learning workloads in enterprise and production environments.

Key Features

- TensorFlow optimization

- High-performance inference

- Model versioning

- REST and gRPC APIs

- Batch inference support

- Hot-swapping model updates

- Scalable serving architecture

Pros

- Mature production reliability

- Excellent TensorFlow integration

- Lightweight serving system

- Strong ecosystem support

Cons

- Primarily optimized for TensorFlow

- Less flexible than newer platforms

- Limited modern LLM tooling

- Requires infrastructure management

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Encryption compatibility and API security support. Additional certifications not publicly stated.

Integrations & Ecosystem

TensorFlow Serving integrates naturally with TensorFlow-centric machine learning pipelines.

- TensorFlow

- Kubernetes

- Docker

- Prometheus

- gRPC

- Google Cloud

- TFX

Support & Community

Broad adoption within TensorFlow ecosystems and strong documentation resources.
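TensorFlow Serving's REST API exposes a predict endpoint at `/v1/models/<model_name>:predict`. Adding `/versions/<n>` before `:predict` pins a specific model version. The endpoint accepts a JSON body with an `instances` list and returns a `predictions` list. The helper below only builds such a request with the standard library. `build_predict_request`, the model name `my_model`, and the host are placeholders, and 8501 is TensorFlow Serving's default REST port.

```python
import json
import urllib.request
from typing import List, Optional


def build_predict_request(host: str, model_name: str, instances: List[list],
                          version: Optional[int] = None) -> urllib.request.Request:
    """Build a TensorFlow Serving REST predict call in row format: {"instances": [...]}."""
    path = f"/v1/models/{model_name}"
    if version is not None:
        path += f"/versions/{version}"  # pin one model version instead of the latest
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url=f"http://{host}{path}:predict",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_predict_request("localhost:8501", "my_model", [[1.0, 2.0, 5.0]])
    print(req.get_method(), req.full_url, req.data)
    # Sending it requires a running server:
    # with urllib.request.urlopen(req, timeout=10) as resp:
    #     print(json.loads(resp.read())["predictions"])
```

Pinning a version this way is a practical companion to the model versioning and hot-swapping features listed above. Clients can stay on a known version while a new one rolls out.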

7- TorchServe

Short description: TorchServe is an open-source serving framework designed specifically for PyTorch models. It simplifies deployment and management of PyTorch-based AI applications while supporting scalable inference APIs and monitoring capabilities.

Key Features

- PyTorch-native serving

- REST and gRPC APIs

- Model versioning

- Batch inference

- Logging and metrics

- GPU acceleration

- Multi-model management

Pros

- Strong PyTorch integration

- Lightweight serving workflows

- Easy deployment process

- Good performance for PyTorch workloads

Cons

- Limited outside PyTorch ecosystem

- Basic operational tooling

- Smaller feature set than enterprise competitors

- Governance features are limited

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

API security support and encryption compatibility. Additional certifications not publicly stated.

Integrations & Ecosystem

TorchServe integrates well with PyTorch deployment workflows and AI infrastructure tooling.

- PyTorch

- Kubernetes

- Prometheus

- Grafana

- Docker

- AWS

- NVIDIA GPUs

Support & Community

Supported by the PyTorch ecosystem with strong open-source community engagement.
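TorchServe splits its REST interface into an inference API (default port 8080) and a management API (default port 8081). The hypothetical helpers below build the two URLs a deployment script might call. The first registers a model archive with server-side batching, and the second sends predictions. The query parameters `url`, `batch_size`, `max_batch_delay` (in milliseconds), and `initial_workers` follow TorchServe's management API. The archive name and hosts are placeholders, so check the parameters against the docs for your TorchServe version.

```python
from urllib.parse import urlencode


def registration_url(management_host: str, mar_file: str, batch_size: int = 1,
                     max_batch_delay_ms: int = 100, initial_workers: int = 1) -> str:
    """Management API call that registers a model archive with server-side batching."""
    query = urlencode({
        "url": mar_file,
        "batch_size": batch_size,
        "max_batch_delay": max_batch_delay_ms,
        "initial_workers": initial_workers,
    })
    return f"http://{management_host}/models?{query}"


def prediction_url(inference_host: str, model_name: str) -> str:
    """Inference API endpoint that receives the input data in a POST body."""
    return f"http://{inference_host}/predictions/{model_name}"


if __name__ == "__main__":
    print(registration_url("localhost:8081", "my_model.mar", batch_size=8, max_batch_delay_ms=50))
    print(prediction_url("localhost:8080", "my_model"))
```

Both URLs are meant to be sent as HTTP POST requests, for example with `urllib.request`.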

8- Vertex AI Prediction

Short description: Vertex AI Prediction is a managed AI inference platform that provides scalable deployment infrastructure for machine learning and generative AI applications. It helps organizations deploy AI models with reduced operational complexity and integrated cloud tooling.

Key Features

- Managed model serving

- Autoscaling infrastructure

- Generative AI support

- GPU and TPU support

- Endpoint monitoring

- Multi-model deployment

- Integrated MLOps workflows

Pros

- Reduced infrastructure management

- Strong cloud scalability

- Integrated AI ecosystem

- Enterprise-grade operations

Cons

- Vendor lock-in concerns

- Cloud costs may increase rapidly

- Less infrastructure customization

- Best suited for cloud-native environments

Platforms / Deployment

Cloud

Security & Compliance

IAM integration, encryption support, audit logging, enterprise cloud security controls. Additional compliance depends on deployment configuration.

Integrations & Ecosystem

Vertex AI Prediction integrates deeply with cloud-native AI and analytics services.

- BigQuery

- Kubernetes

- TensorFlow

- Vertex AI Pipelines

- Cloud Storage

- Monitoring tools

- Generative AI APIs

Support & Community

Strong enterprise documentation and managed cloud support experience.

9- AWS SageMaker Inference

Short description: AWS SageMaker Inference is a managed AI serving platform for deploying machine learning models at scale. It supports real-time, asynchronous, and serverless inference patterns across enterprise AI workloads.

Key Features

- Managed inference endpoints

- Serverless inference

- Multi-model endpoints

- Autoscaling support

- Real-time monitoring

- GPU acceleration

- Integrated MLOps workflows

Pros

- Broad cloud ecosystem integration

- Flexible inference deployment modes

- Enterprise scalability

- Strong operational tooling

Cons

- Can become expensive at scale

- AWS learning curve

- Vendor lock-in risks

- Infrastructure complexity for beginners

Platforms / Deployment

Cloud

Security & Compliance

IAM integration, encryption support, audit logging, VPC support, enterprise cloud security controls.

Integrations & Ecosystem

AWS SageMaker integrates with a wide range of cloud infrastructure and AI services.

- Amazon EKS

- AWS Lambda

- S3

- CloudWatch

- Hugging Face

- MLflow

- Bedrock

Support & Community

Extensive enterprise ecosystem with strong partner and documentation support.

10- Hugging Face Text Generation Inference

Short description: Hugging Face Text Generation Inference is a specialized serving platform optimized for large language models and generative AI workloads. It focuses on efficient transformer inference and scalable deployment for modern AI applications.

Key Features

- Transformer optimization

- LLM-focused serving

- Tensor parallelism

- Continuous batching

- Streaming token generation

- Quantization support

- OpenAI-compatible APIs

Pros

- Excellent LLM optimization

- Strong generative AI ecosystem

- Developer-friendly APIs

- Active open-source adoption

Cons

- Primarily focused on LLM workloads

- Narrower scope than broader serving platforms

- Enterprise tooling still maturing

- Infrastructure tuning may be required

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication support and infrastructure-level security compatibility. Additional certifications not publicly stated.

Integrations & Ecosystem

The platform integrates naturally with transformer-based AI ecosystems and generative AI workflows.

- Hugging Face Hub

- Transformers

- Kubernetes

- LangChain

- PyTorch

- OpenAI-compatible clients

- NVIDIA GPUs

Support & Community

Large open-source ecosystem with strong developer community momentum.
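Continuous batching is one of TGI's listed features. It schedules work per generation step rather than per request. Finished sequences leave the batch and waiting requests join immediately, instead of the whole batch waiting for its longest sequence. The simulation below counts decode steps under both policies. It is a hypothetical, simplified model (one token per sequence per step, fixed output lengths, no prefill cost), not TGI's scheduler.

```python
from collections import deque
from typing import List


def _validate(output_lengths: List[int], max_batch: int) -> None:
    if max_batch < 1 or any(n < 1 for n in output_lengths):
        raise ValueError("max_batch and every output length must be at least 1")


def static_batching_steps(output_lengths: List[int], max_batch: int) -> int:
    """Request-level batching: each batch decodes until its longest sequence is done."""
    _validate(output_lengths, max_batch)
    steps = 0
    for start in range(0, len(output_lengths), max_batch):
        steps += max(output_lengths[start:start + max_batch])
    return steps


def continuous_batching_steps(output_lengths: List[int], max_batch: int) -> int:
    """Iteration-level batching: a finished sequence frees its slot for the next step."""
    _validate(output_lengths, max_batch)
    waiting = deque(output_lengths)
    running: List[int] = []  # tokens still to generate for each active sequence
    steps = 0
    while waiting or running:
        # Admit queued requests into any free slots before the next decode step.
        while waiting and len(running) < max_batch:
            running.append(waiting.popleft())
        steps += 1  # one decode step emits one token for every running sequence
        running = [left - 1 for left in running if left > 1]
    return steps


if __name__ == "__main__":
    lengths = [10, 2, 2, 2]  # hypothetical output lengths in tokens
    print("static:", static_batching_steps(lengths, max_batch=2))
    print("continuous:", continuous_batching_steps(lengths, max_batch=2))
```

This example has one long request, three short ones, and room for two sequences. The static policy needs 12 decode steps, while the continuous policy needs 10, because the short requests run alongside the long one instead of waiting for it to finish.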

Comparison Table (Top 10)

| Tool Name | Best For | Platforms Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| NVIDIA Triton | GPU-intensive enterprise AI | Linux / Cloud | Hybrid | GPU optimization | N/A |
| KServe | Kubernetes-native serving | Cloud / Linux | Hybrid | Serverless inference | N/A |
| BentoML | Developer-focused deployment | Cloud / Linux / macOS | Hybrid | API-first workflows | N/A |
| Ray Serve | Distributed AI serving | Cloud / Linux | Hybrid | Distributed orchestration | N/A |
| Seldon Core | Enterprise MLOps | Cloud / Linux | Hybrid | Inference orchestration | N/A |
| TensorFlow Serving | TensorFlow production workloads | Linux / Cloud | Hybrid | TensorFlow optimization | N/A |
| TorchServe | PyTorch deployments | Linux / Cloud | Hybrid | PyTorch-native serving | N/A |
| Vertex AI Prediction | Managed enterprise AI | Cloud | Cloud | Managed scalability | N/A |
| AWS SageMaker Inference | Cloud-native enterprise AI | Cloud | Cloud | Flexible inference modes | N/A |
| Hugging Face TGI | Generative AI inference | Cloud / Linux | Hybrid | LLM optimization | N/A |

Evaluation & Scoring of AI Inference Serving Platforms (Model Serving)

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| NVIDIA Triton | 9.6 | 7.4 | 9.2 | 8.8 | 9.7 | 8.9 | 8.1 | 8.8 |
| KServe | 9.0 | 7.1 | 8.8 | 8.5 | 8.9 | 8.1 | 8.7 | 8.5 |
| BentoML | 8.5 | 8.9 | 8.3 | 7.8 | 8.4 | 8.0 | 8.8 | 8.4 |
| Ray Serve | 9.1 | 7.0 | 8.5 | 7.9 | 9.3 | 8.1 | 8.4 | 8.4 |
| Seldon Core | 8.8 | 7.2 | 8.7 | 8.8 | 8.6 | 8.0 | 8.1 | 8.3 |
| TensorFlow Serving | 8.4 | 7.5 | 7.8 | 7.9 | 8.8 | 8.5 | 8.9 | 8.3 |
| TorchServe | 8.0 | 8.2 | 7.7 | 7.4 | 8.2 | 7.8 | 8.6 | 8.0 |
| Vertex AI Prediction | 9.0 | 8.8 | 8.9 | 9.2 | 9.0 | 8.9 | 7.6 | 8.8 |
| AWS SageMaker Inference | 9.1 | 8.0 | 9.4 | 9.3 | 9.1 | 8.8 | 7.5 | 8.7 |
| Hugging Face TGI | 8.9 | 8.4 | 8.5 | 7.5 | 9.1 | 8.4 | 8.7 | 8.6 |

These scores are comparative and intended to help buyers evaluate strengths across different deployment scenarios. Each weighted total is the sum of the seven criterion scores multiplied by their column weights, which add up to 100%, rounded to one decimal place. Higher scores do not automatically mean a platform is universally better. Some platforms prioritize enterprise governance and scalability, while others focus on developer simplicity or distributed AI flexibility. Buyers should compare infrastructure requirements, operational complexity, deployment strategy, and long-term scalability before selecting a platform.
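You can recompute the Weighted Total column from the seven criterion scores and the percentage weights in the column headers. The sketch below does this with `decimal.Decimal`, so that ties such as 8.25 round predictably (half-up) instead of being distorted by binary floating-point error. The function and constant names are hypothetical.

```python
from decimal import ROUND_HALF_UP, Decimal
from typing import Dict, Sequence

# Column weights from the scoring table, in percent, in column order (they must sum to 100).
WEIGHTS: Dict[str, int] = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores: Sequence[float], weights: Dict[str, int] = WEIGHTS) -> Decimal:
    """Sum of score x weight / 100, rounded half-up to one decimal place."""
    if len(scores) != len(weights) or sum(weights.values()) != 100:
        raise ValueError("need one score per criterion and weights summing to 100")
    # str() keeps each score's digits exactly as written (9.6, not 9.5999...).
    total = sum(Decimal(str(s)) * w for s, w in zip(scores, weights.values())) / 100
    return total.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    rows = {
        "NVIDIA Triton": [9.6, 7.4, 9.2, 8.8, 9.7, 8.9, 8.1],
        "TensorFlow Serving": [8.4, 7.5, 7.8, 7.9, 8.8, 8.5, 8.9],
    }
    for name, scores in rows.items():
        print(name, weighted_total(scores))
```

Changing the dictionary of weights is a quick way to re-rank the platforms for your own priorities, for example by raising Security for a regulated workload.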

Which AI Inference Serving Platform (Model Serving Tool) Is Right for You?

Solo / Freelancer

Individual developers and AI freelancers often benefit from lightweight deployment workflows and reduced infrastructure complexity. BentoML and Hugging Face Text Generation Inference are strong options for rapid experimentation and fast deployment.

SMB

Small and medium-sized businesses usually prioritize ease of deployment, operational simplicity, and scalability. Vertex AI Prediction and AWS SageMaker Inference provide managed infrastructure that reduces operational burden.

Mid-Market

Mid-market organizations often require better scalability, monitoring, and governance capabilities. KServe and Seldon Core provide flexible Kubernetes-native infrastructure for growing AI operations, and Ray Serve can run on the same Kubernetes clusters through KubeRay.

Enterprise

Large enterprises typically prioritize performance optimization, governance, scalability, and security. NVIDIA Triton, AWS SageMaker Inference, and Vertex AI Prediction are commonly suitable for enterprise-scale AI environments.

Budget vs Premium

Open-source tools like KServe, Ray Serve, and BentoML can reduce licensing costs but may require stronger engineering capabilities. Managed cloud platforms reduce operational effort but can increase long-term infrastructure expenses.

Feature Depth vs Ease of Use

Advanced enterprise platforms usually provide stronger observability, governance, and optimization capabilities but require more technical expertise. Developer-focused platforms simplify onboarding but may lack advanced enterprise operational tooling.

Integrations & Scalability

Organizations heavily invested in cloud ecosystems often benefit from native integrations with AWS or Google Cloud services. Kubernetes-centric organizations may prefer portable platforms like KServe or Seldon Core.

Security & Compliance Needs

Regulated industries should prioritize platforms with strong IAM controls, encryption support, audit logging, and governance capabilities. Managed cloud environments often provide stronger built-in compliance tooling.

Frequently Asked Questions (FAQs)

1. What is an AI inference serving platform?

An AI inference serving platform is infrastructure used to deploy trained machine learning or generative AI models into production environments. These platforms manage prediction requests, scaling, monitoring, and optimization for real-world AI applications.

2. Why is inference optimization important?

Inference optimization improves latency, throughput, and infrastructure efficiency. Proper optimization reduces operational costs while improving user experience for AI-powered applications.
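Latency is usually reported as percentiles (p50, p95, p99) rather than as an average, because a small share of slow requests can dominate user experience. The following is a minimal sketch using the nearest-rank percentile method. The function name and the sample numbers are hypothetical.

```python
import math
from typing import Dict, Sequence


def latency_summary(latencies_ms: Sequence[float], window_s: float) -> Dict[str, float]:
    """Nearest-rank p50/p95/p99 latency plus throughput over a measurement window."""
    if not latencies_ms or window_s <= 0:
        raise ValueError("need samples and a positive window")
    ordered = sorted(latencies_ms)

    def percentile(p: int) -> float:
        # Nearest rank: the smallest sample with at least p% of samples at or below it.
        rank = max(1, math.ceil(p * len(ordered) / 100))
        return ordered[rank - 1]

    return {
        "p50_ms": percentile(50),
        "p95_ms": percentile(95),
        "p99_ms": percentile(99),
        "throughput_rps": len(ordered) / window_s,
    }


if __name__ == "__main__":
    samples = [20.0] * 98 + [900.0, 1000.0]  # hypothetical: two slow outliers
    print(latency_summary(samples, window_s=1.0))
```

In this sample, the median stays at 20 ms while p99 exposes the 900 ms tail that an average would blur.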

3. Are open-source model serving platforms suitable for enterprises?

Yes, many enterprises successfully use open-source serving platforms like KServe and NVIDIA Triton. However, these solutions typically require stronger platform engineering expertise.

4. What is the difference between training and inference?

Training involves building and improving AI models using datasets. Inference focuses on using trained models to generate predictions or responses in production systems.

5. Which deployment model is best for generative AI workloads?

Hybrid and cloud deployments are common for generative AI because they support scalable GPU infrastructure and flexible resource allocation.

6. What are common mistakes when deploying inference infrastructure?

Common mistakes include poor autoscaling configuration, underestimating GPU costs, ignoring observability, and choosing platforms that do not match workload complexity.

7. How important is Kubernetes for AI model serving?

Kubernetes has become a standard foundation for scalable AI infrastructure because it provides orchestration, autoscaling, and deployment flexibility.

8. Can inference serving platforms support multiple models at once?

Yes, many modern inference platforms support multi-model serving, intelligent routing, and orchestration across multiple AI workloads.
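The routing part of multi-model serving can be illustrated with a small gateway that maps each model name to an ordered list of backends and skips any backend marked unhealthy. This is a hypothetical sketch: `ModelRouter` and the backend names are illustrative and do not come from any particular product.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class ModelRouter:
    """Hypothetical gateway: model name -> ordered list of candidate backends."""

    routes: Dict[str, List[str]]
    unhealthy: Set[str] = field(default_factory=set)

    def route(self, model: str) -> str:
        """Return the first healthy backend for the requested model."""
        backends = self.routes.get(model)
        if backends is None:
            raise KeyError(f"unknown model: {model}")
        for backend in backends:
            if backend not in self.unhealthy:
                return backend
        raise RuntimeError(f"no healthy backend for {model}")


if __name__ == "__main__":
    router = ModelRouter({"chat-llm": ["gpu-pool-a", "gpu-pool-b"], "fraud-model": ["cpu-pool"]})
    print(router.route("chat-llm"))
    router.unhealthy.add("gpu-pool-a")  # e.g. after failed health checks
    print(router.route("chat-llm"))
```

Real AI gateways layer health checks, weighted traffic splits, and cost- or latency-aware policies on top of this basic lookup.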

9. What integrations are most important for AI serving platforms?

Important integrations include Kubernetes, monitoring platforms, model registries, CI/CD pipelines, cloud storage, and API gateways.

10. How difficult is migration between serving platforms?

Migration complexity depends on deployment architecture, APIs, infrastructure dependencies, and orchestration design. Open standards and Kubernetes-native tools can reduce migration challenges.

Conclusion

AI inference serving platforms have become a critical foundation for organizations deploying production-grade machine learning and generative AI applications. The right platform depends on infrastructure maturity, operational expertise, scalability requirements, deployment flexibility, and security expectations. Enterprise organizations often prioritize performance optimization, governance, and reliability, while smaller teams may focus more on deployment simplicity and cost efficiency. Open-source platforms continue to evolve rapidly, but managed cloud services remain attractive for teams looking to reduce operational complexity. There is no single universal solution for every AI workload or deployment strategy. The best approach is to shortlist a few platforms that align with your architecture goals, run pilot deployments, validate performance and integration requirements, and measure operational costs before making a long-term infrastructure decision.